I’m tired of pretending Galaxy AI matters

It's not worth all the attention.

https://www.androidauthority.com/im-tired-pretending-galaxy-ai-matters-3665546/

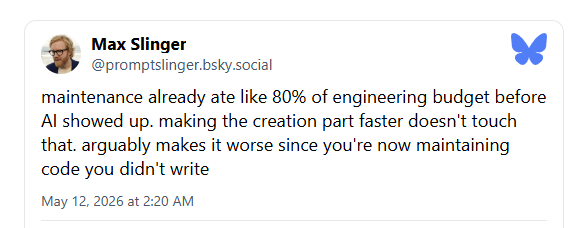

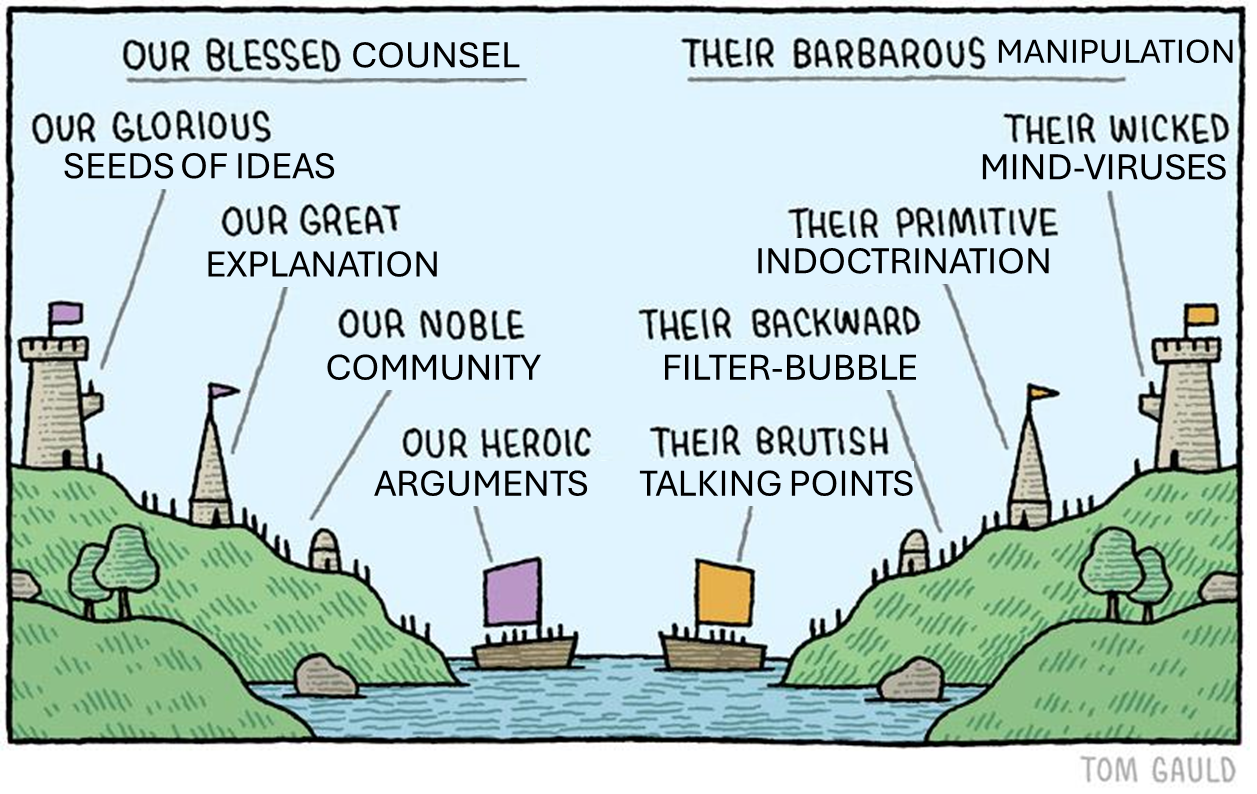

The author is skeptical of Samsung's Galaxy AI features, arguing that they are not innovative enough to deserve the attention they receive. The piece suggests that current AI integrations in smartphones are largely superficial and lack genuinely groundbreaking capabilities, and it implies that the focus on these features distracts from more substantial technological advances.

IMPACT: Suggests current smartphone AI integrations are superficial and lack true innovation.