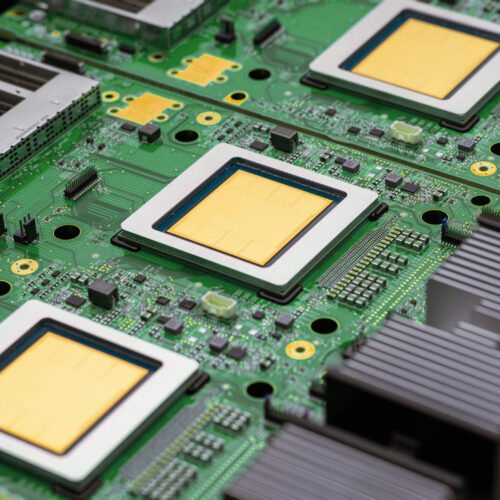

Google has introduced its eighth-generation Tensor Processing Units (TPUs): the TPU 8t for training and the TPU 8i for inference, both aimed at accelerating the development of AI agents. The TPU 8t is designed to drastically reduce training times for large AI models, with pods housing up to 9,600 chips and offering significantly more compute power than previous generations. The TPU 8i focuses on efficient inference, supporting larger pods and providing improved on-chip memory to handle models with longer context windows.

IMPACT Accelerates AI agent development and potentially lowers inference costs for large models.

RANK_REASON Launch of new AI-specific hardware by a major tech company.