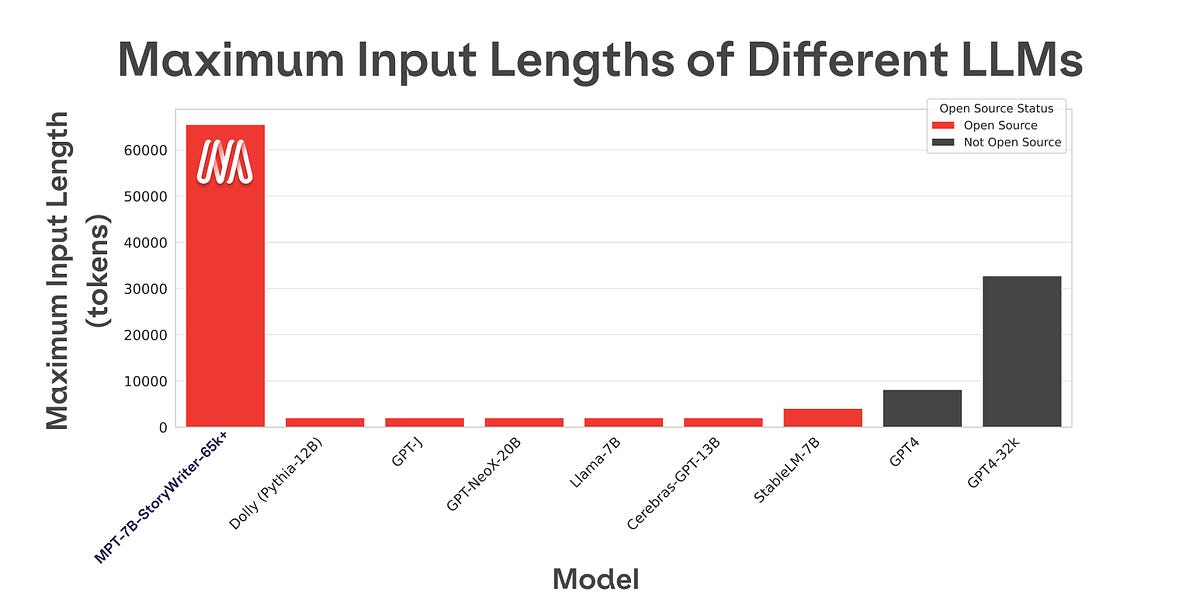

MosaicML has released MPT-7B, an open-source transformer model trained on one trillion tokens of text and code that matches LLaMA-7B's quality and is licensed for commercial use. One fine-tuned variant, MPT-7B-StoryWriter-65k+, was trained with a 65k-token context and can extrapolate to as many as 84,000 tokens, far beyond the roughly 2,000-token window of GPT-3. MosaicML also open-sourced its LLM Foundry codebase used for training and evaluation, alongside three fine-tuned versions of MPT-7B, including the StoryWriter variant specialized for long-form storytelling.

Summary written by gemini-2.5-flash-lite from 1 source.

RANK_REASON Release of an open-source model with significant context length improvements and commercially viable licensing.