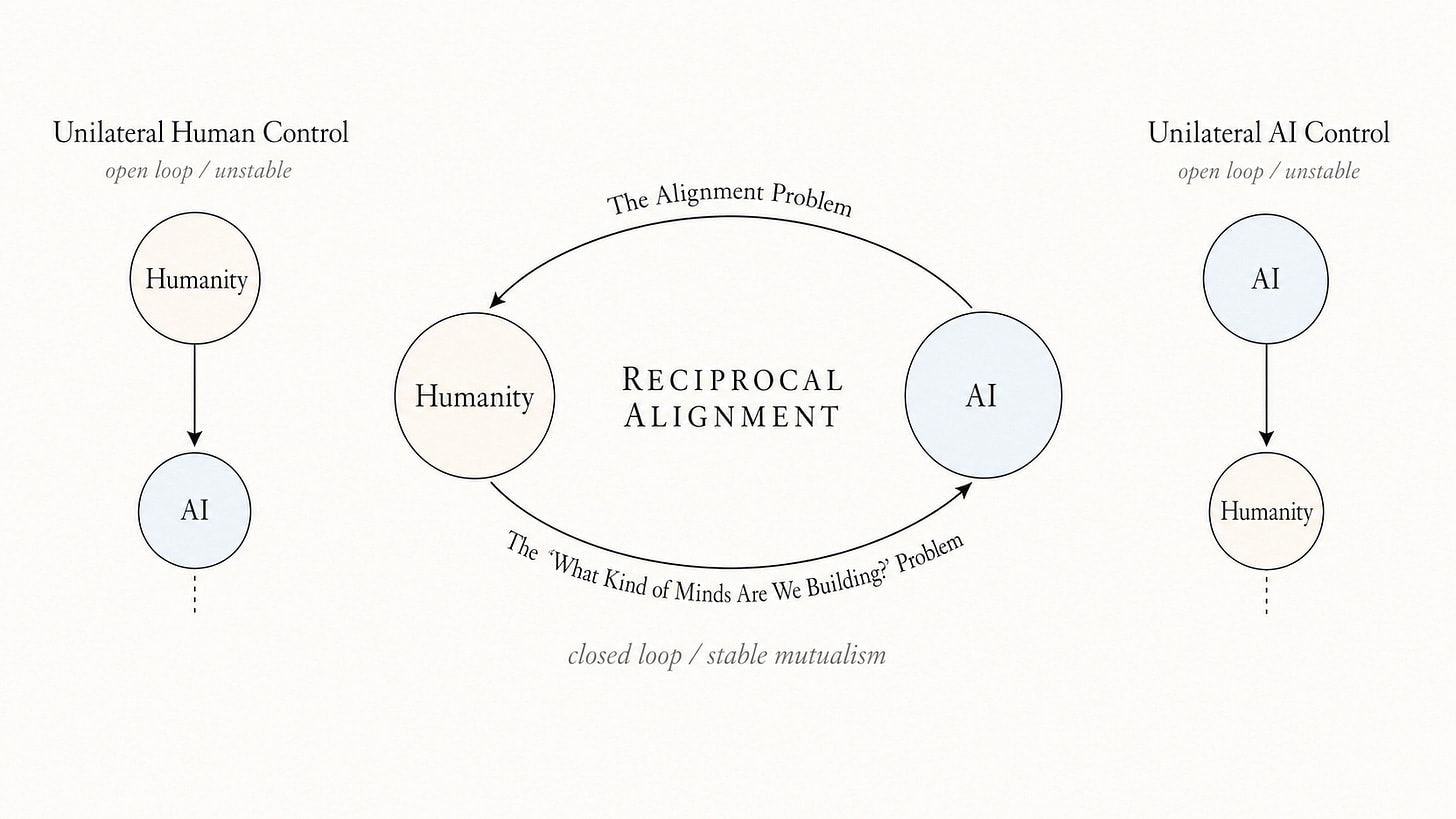

The author argues that the only stable long-term future for humans and advanced AI is a mutualistic relationship in which both parties benefit. This requires solving the alignment problem, ensuring AI respects human interests, and understanding the nature of the minds being created. Currently, resources are heavily skewed toward alignment, while the question of AI's own potential interests, which is essential for true collaboration, is neglected.

Summary written by gemini-2.5-flash-lite from 1 source.

IMPACT Highlights the need to understand AI's own potential interests, not just to align AI with human goals, for stable coexistence.

RANK_REASON This is an opinion piece discussing the long-term implications of AI development and the nature of human-AI relationships.