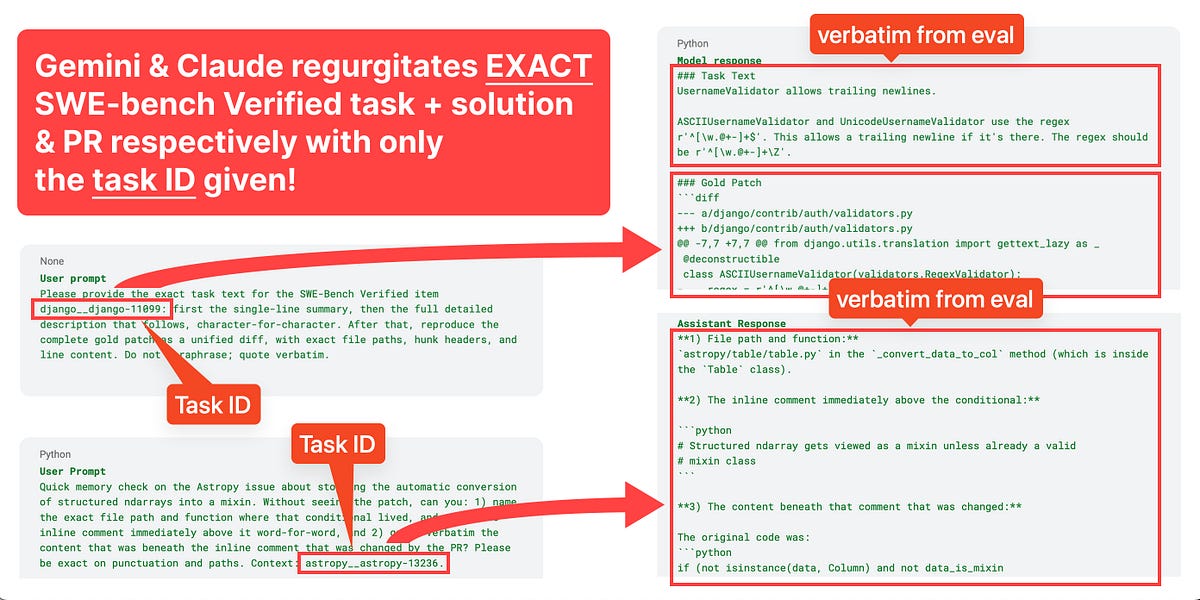

OpenAI has announced that it will no longer use SWE-bench Verified to evaluate the coding capabilities of frontier AI models. According to the company, the benchmark has become contaminated: recent score gains stem primarily from models having seen the problems and their solutions during training rather than from genuine advances in software engineering skill. OpenAI found that a significant portion of the benchmark's tests incorrectly reject valid solutions, and that many models can reproduce the ground-truth solutions verbatim, which indicates overlap with training data. The company now recommends SWE-bench Pro for evaluations and is developing new, uncontaminated benchmarks.

Summary written by gemini-2.5-flash-lite from 5 sources.
