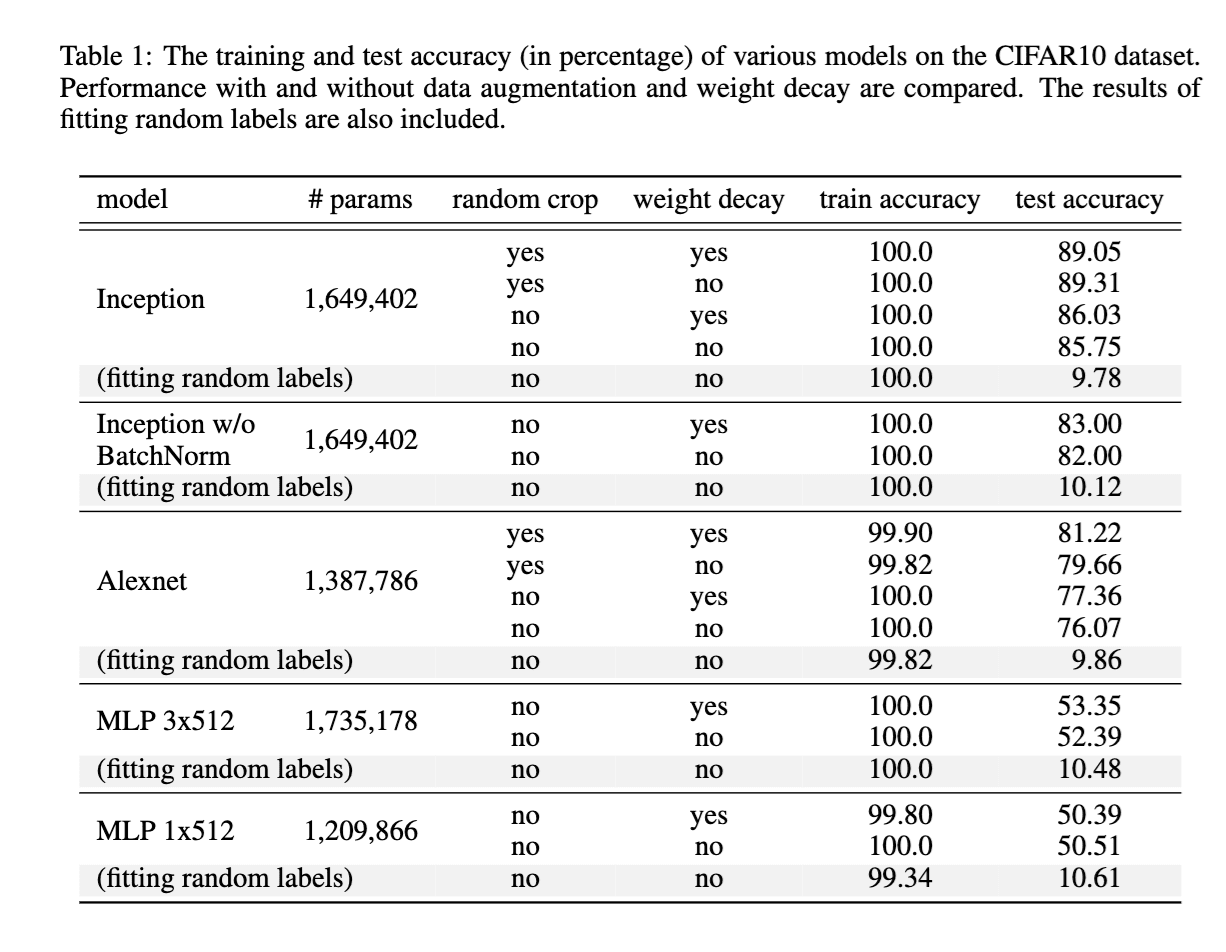

Two papers, Zhang et al. (2016) and Nagarajan and Kolter (2019), are discussed for their impact on deep learning theory. The 2016 paper demonstrated that standard neural networks can easily memorize random data, challenging theories of generalization based on hypothesis class complexity. Subsequent research attempted to develop data-dependent bounds, but the 2019 paper is presented as a further blow to these efforts, suggesting that uniform convergence may be unable to explain generalization in deep learning.

Summary written by gemini-2.5-flash-lite from 4 sources.

IMPACT Challenges existing theoretical frameworks for understanding deep learning generalization, potentially redirecting future research.
