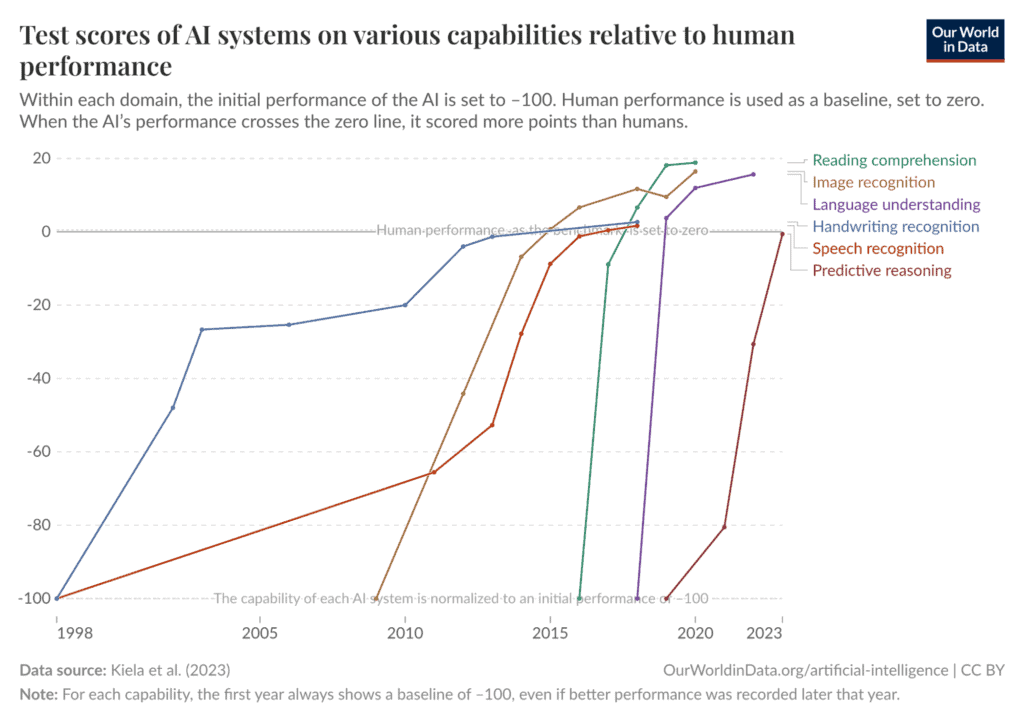

Prominent figures in AI, including Sam Altman and pioneers such as Geoffrey Hinton and Yoshua Bengio, are increasingly discussing the possibility of an impending intelligence explosion, in which AI surpasses human capabilities. This speculation is fueled by rapid advances in AI models, such as OpenAI's recent 'o1' and 'o3' models, which have demonstrated superhuman performance on complex benchmarks in fields like medicine and mathematics. Experts warn that an uncontrolled superintelligence could pose an existential risk to humanity, underscoring the urgent need to understand and manage these potential developments.

Summary written from 1 source.
