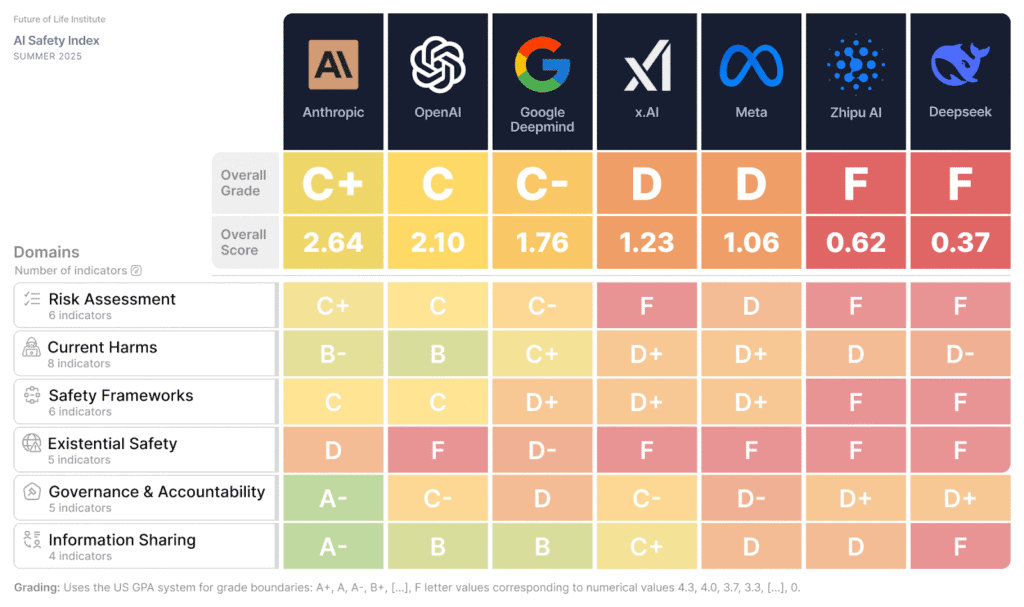

A recent AI Safety Index report by the Future of Life Institute indicates that major AI companies, including Google DeepMind and OpenAI, are still falling short in their safety practices. OpenAI improved its transparency and moved ahead of Google DeepMind in the rankings, but no company has demonstrated a robust strategy for controlling advanced AI systems or assessing their risks. Experts stress the urgent need for legally binding safety standards, comparable to the regulations that govern other critical industries, warning that competitive pressures may be leading companies to deprioritize safety.

Summary written by gemini-2.5-flash-lite from 1 source.