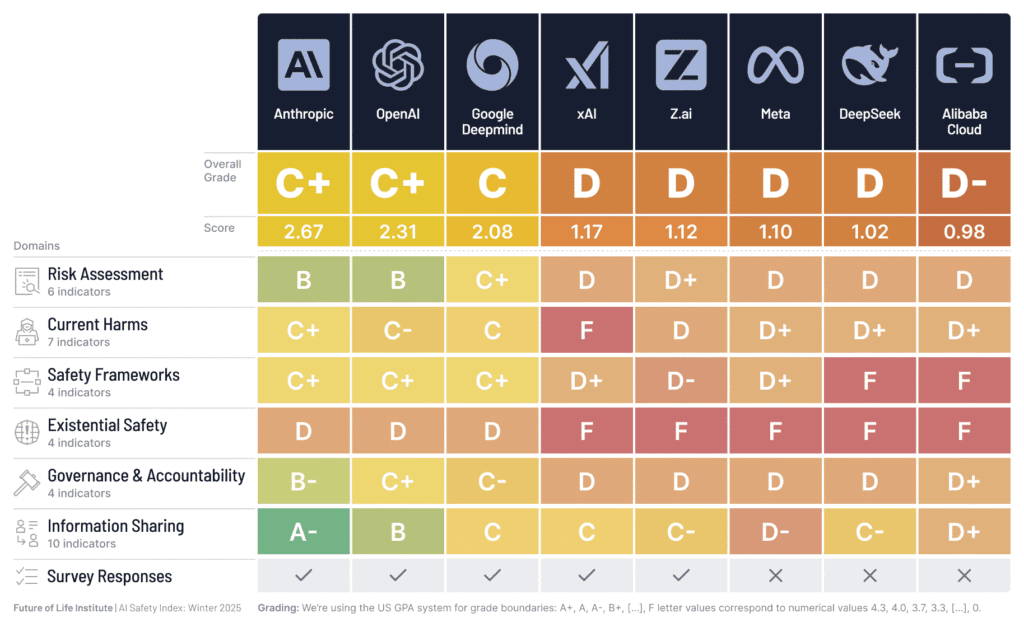

A new report from the Future of Life Institute (FLI) indicates that major AI companies are falling short of their safety commitments even as AI capabilities advance. The FLI's AI Safety Index, which evaluated eight leading companies including OpenAI, Anthropic, and Google DeepMind, found significant weaknesses in areas such as risk assessment and governance. Notably, none of the companies has a robust strategy for controlling potential superintelligent AI, a risk that industry leaders themselves acknowledge. The report also highlights a growing performance gap between top companies such as Anthropic and OpenAI and others such as xAI and Meta, particularly in systematic safety processes and information sharing.

