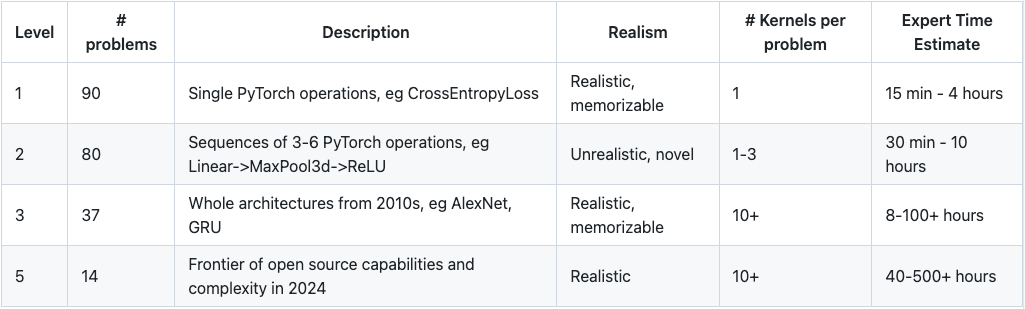

Researchers have introduced KernelAgent, a benchmark that measures how well AI models can optimize compute kernels, a key factor in the speed of AI development. The benchmark is adapted from KernelBench and includes tasks drawn from frontier models such as DeepSeek-V3. With agent scaffolding and prompt tuning, models such as GPT-4o and Claude 3.5 Sonnet achieved an average speedup of 1.81x, substantially higher than in previous evaluations.

Summary written by gemini-2.5-flash-lite from 1 source.
