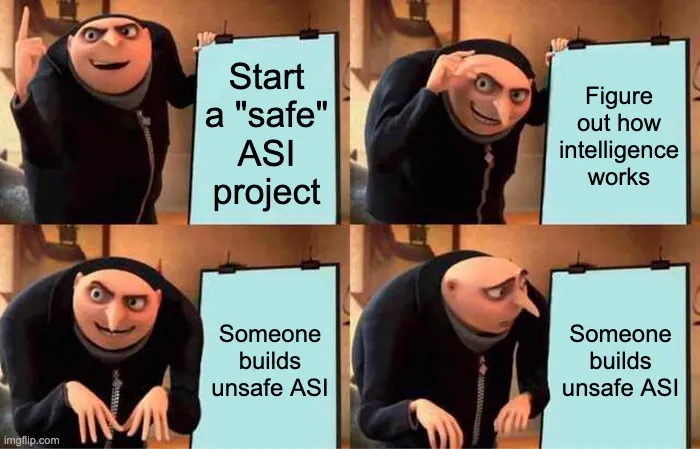

A recent analysis argues that achieving safe Artificial Superintelligence (ASI) is fundamentally impossible without a global ban on ASI development. The author contends that the technical path to building controllable ASI inevitably leads to the creation of unsafe ASI, which is significantly easier to develop. Any pursuit of safe ASI therefore requires one of three things: extreme secrecy, complete technical isolation, or a globally enforced ban on ASI research.

Summary written by gemini-2.5-flash-lite from 1 source.

RANK_REASON Opinion piece by a named credible voice on a theoretical AI safety challenge.