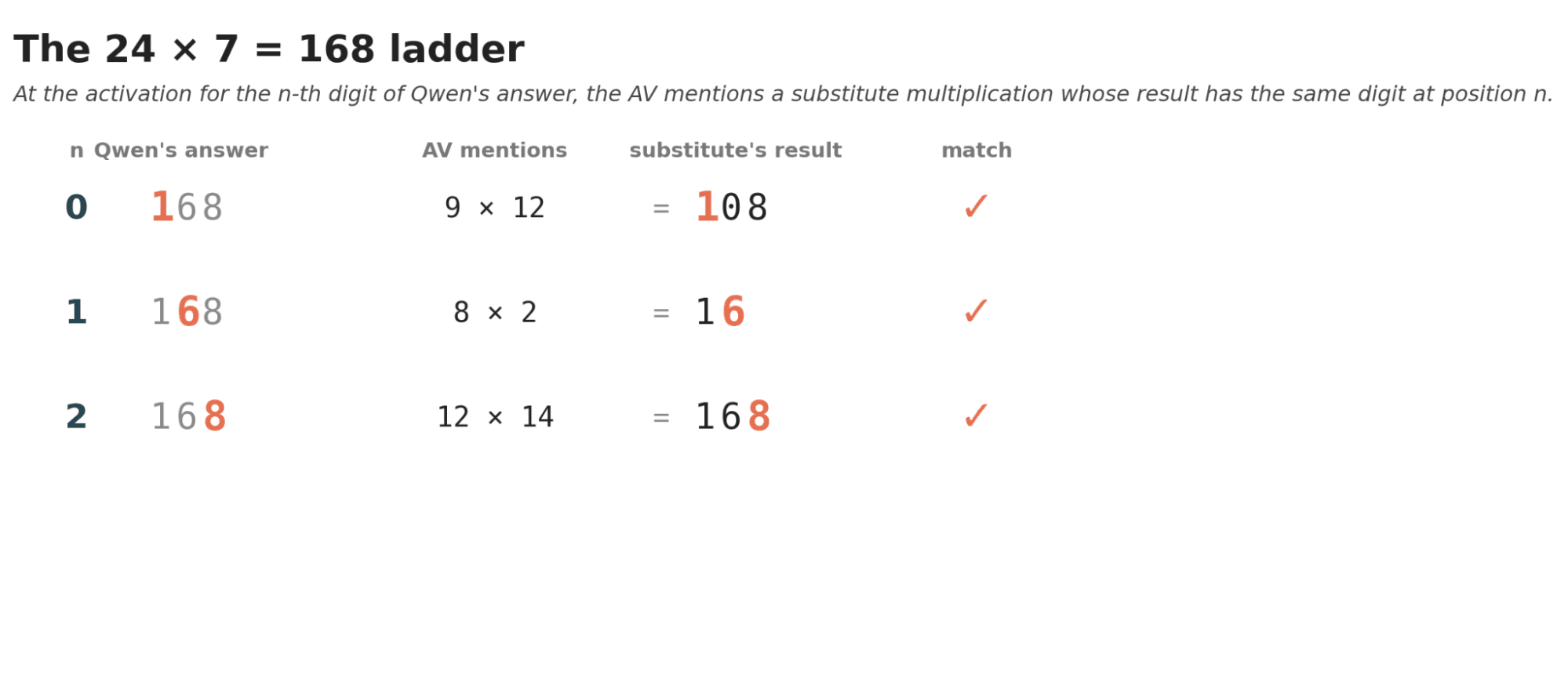

Researchers are exploring Anthropic's new Neural Language Autoencoders (NLAs) as a way to understand the internal workings of large language models. An NLA pairs an encoder model, which translates an LLM's activations into natural language, with a decoder model, which translates that text back into activations. The intermediate text then offers a way to interpret model behavior. Initial experiments with Qwen 2.5 7B suggest that the model generates multiplication results digit by digit. For each digit it often uses substitute problems that share the same digit in the corresponding position.
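The round trip described above (activation to text, then text back to activation) can be illustrated with a toy sketch. This is not Anthropic's method. Real NLAs use trained language models as encoder and decoder, and the summary does not describe how they are trained. Everything here is a hypothetical stand-in: a tiny 4-dimensional activation space, the `FEATURES` dictionary of named directions, and the helpers `encode`, `decode`, and `reconstruction_error`. The sketch shows only the core idea: the text description is a bottleneck, and the reconstruction error measures how much of the activation survives being put into words.

```python
# Hypothetical "feature dictionary": each named concept is a unit direction
# in a toy 4-dimensional activation space. Real NLAs use LLMs as encoder and
# decoder; this lookup table is only an illustrative stand-in.
FEATURES = {
    "multiplication": [1.0, 0.0, 0.0, 0.0],
    "tens digit": [0.0, 1.0, 0.0, 0.0],
    "carry": [0.0, 0.0, 1.0, 0.0],
    "French text": [0.0, 0.0, 0.0, 1.0],
}


def dot(a, b):
    """Inner product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def encode(activation, k=2):
    """Toy encoder: activation -> natural-language description.

    Keeps the k features with the largest projections and writes them
    as 'name:weight' pairs separated by '; '.
    """
    scores = sorted(
        ((dot(activation, vec), name) for name, vec in FEATURES.items()),
        reverse=True,
    )
    return "; ".join(f"{name}:{score:.2f}" for score, name in scores[:k])


def decode(description, dim=4):
    """Toy decoder: parse the description back into an activation vector."""
    recon = [0.0] * dim
    for part in description.split("; "):
        name, weight = part.rsplit(":", 1)
        for i, v in enumerate(FEATURES[name]):
            recon[i] += float(weight) * v
    return recon


def reconstruction_error(activation, k=2):
    """Mean squared error of the round trip activation -> text -> activation."""
    recon = decode(encode(activation, k))
    return sum((a - r) ** 2 for a, r in zip(activation, recon)) / len(activation)


if __name__ == "__main__":
    act = [0.9, 0.4, 0.1, 0.0]
    print(encode(act))                     # multiplication:0.90; tens digit:0.40
    print(reconstruction_error(act))       # small: the 'carry' component was dropped
    print(reconstruction_error(act, k=4))  # 0.0: the description kept everything
```

Shortening the description (smaller `k`) forces it to drop information, and the reconstruction error rises. In this toy setting, a description that reconstructs well is one that faithfully captures what the activation encodes.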

Summary written by gemini-2.5-flash-lite from 1 source.

IMPACT New interpretability tools like NLAs could unlock deeper understanding of LLM reasoning processes.

RANK_REASON The cluster describes a novel research method applied to an open-source model.