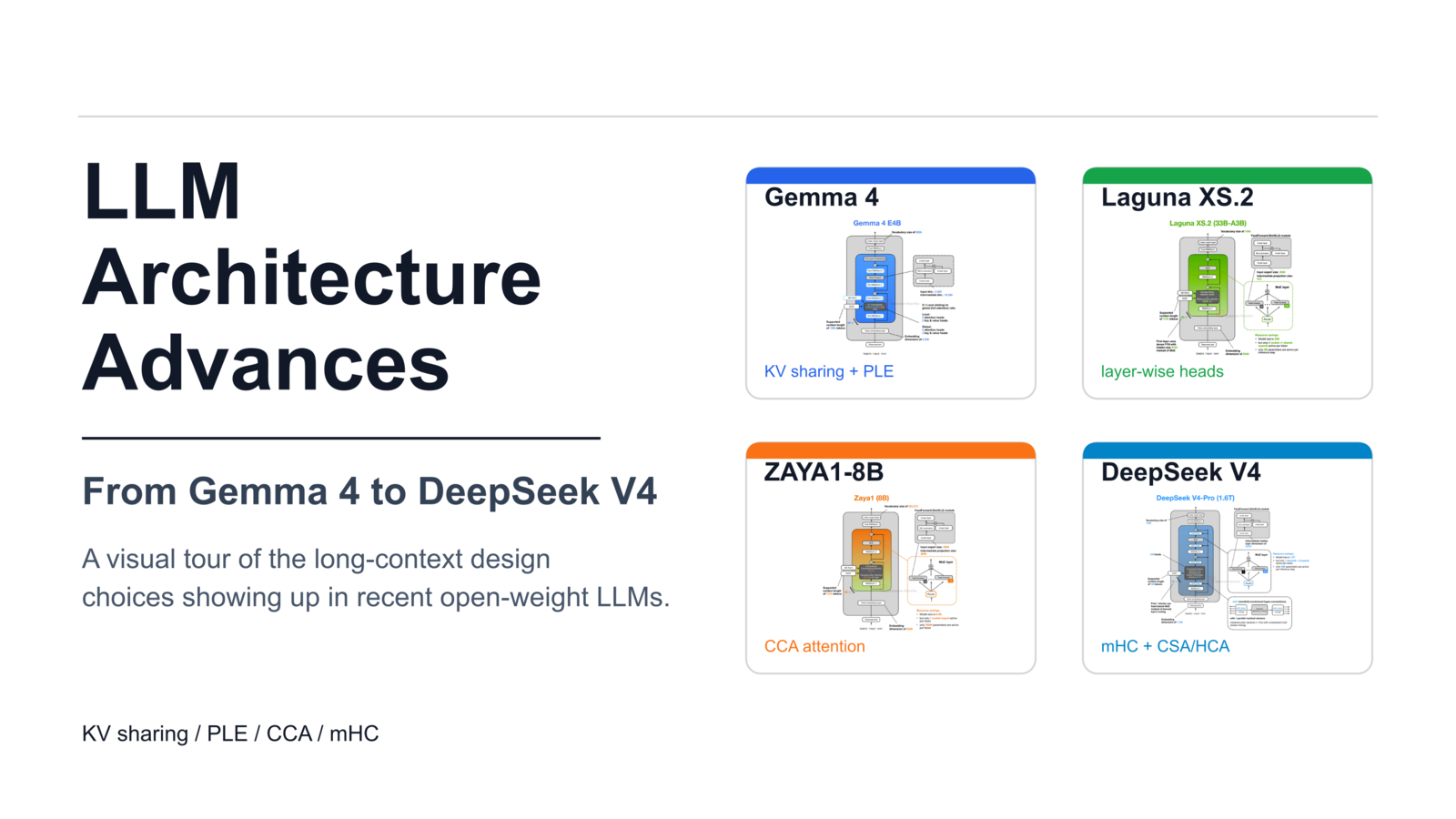

Sebastian Raschka's analysis highlights recent architectural innovations in open-weight large language models, focusing on techniques to improve long-context efficiency. Newer models like Gemma 4 and DeepSeek V4 are incorporating methods such as KV sharing, layer-wise attention budgeting, and compressed attention to reduce the computational and memory costs associated with processing extended sequences. These architectural tweaks are crucial as LLMs are increasingly used in reasoning and agent-based workflows that require maintaining more information over longer periods. AI

Summary written by gemini-2.5-flash-lite from 1 source. How we write summaries →

IMPACT New architectural techniques in open-weight LLMs are improving efficiency for long contexts, potentially enabling more complex reasoning and agent applications.

RANK_REASON The cluster discusses architectural innovations in LLMs detailed in an analysis piece, focusing on technical aspects rather than a new model release. [lever_c_demoted from research: ic=1 ai=1.0]