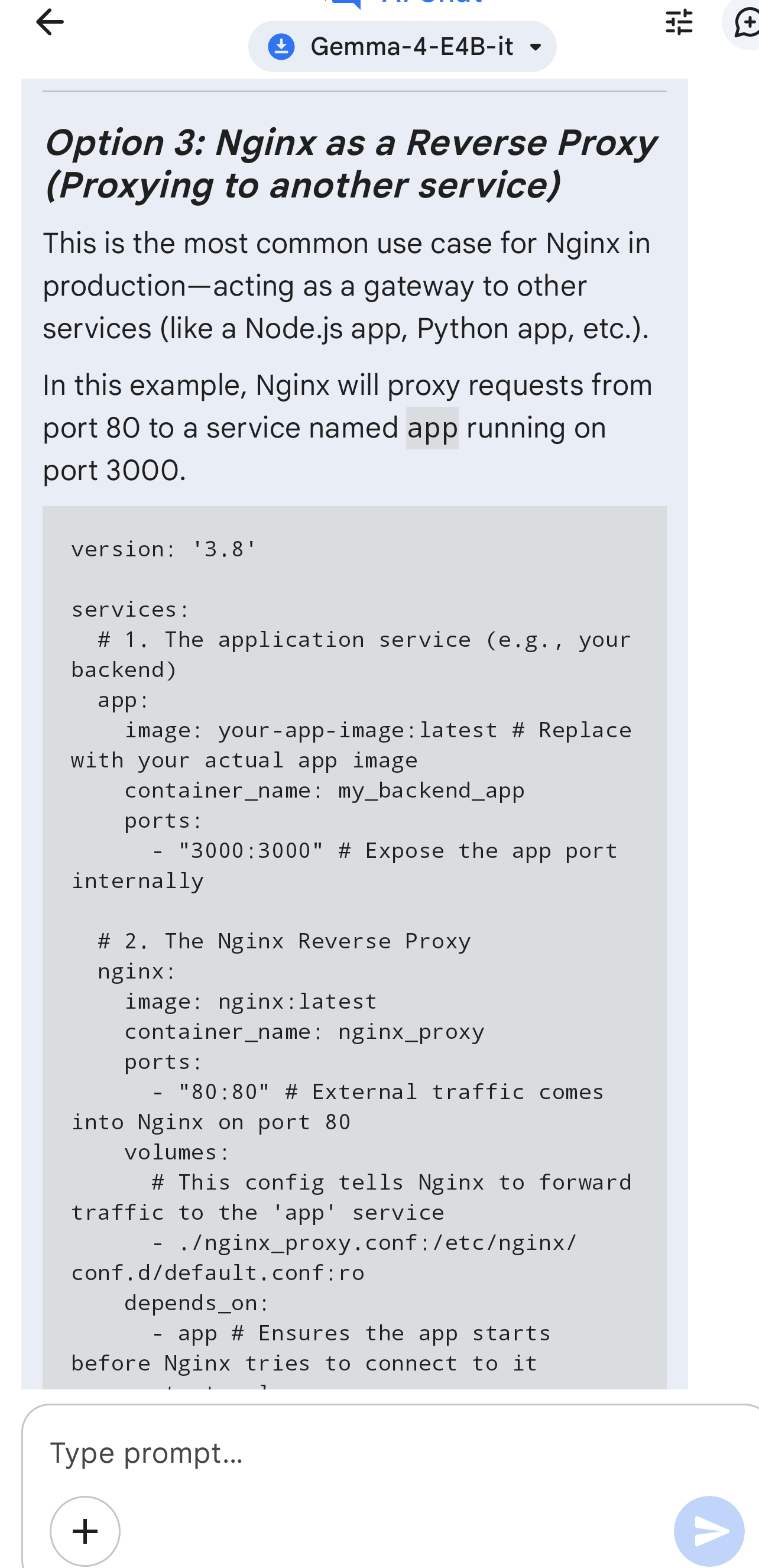

A user tested Google's Gemma LLM by running it offline on a local device to see whether it could give useful advice without internet access. The model generated practical instructions for deploying an Nginx proxy in Docker, showing that it can be useful for offline tasks.

Summary written by gemini-2.5-flash-lite from 2 sources.

IMPACT Demonstrates the potential for local LLMs to provide practical, offline assistance for technical tasks.

RANK_REASON User testing of an existing model for offline capabilities.