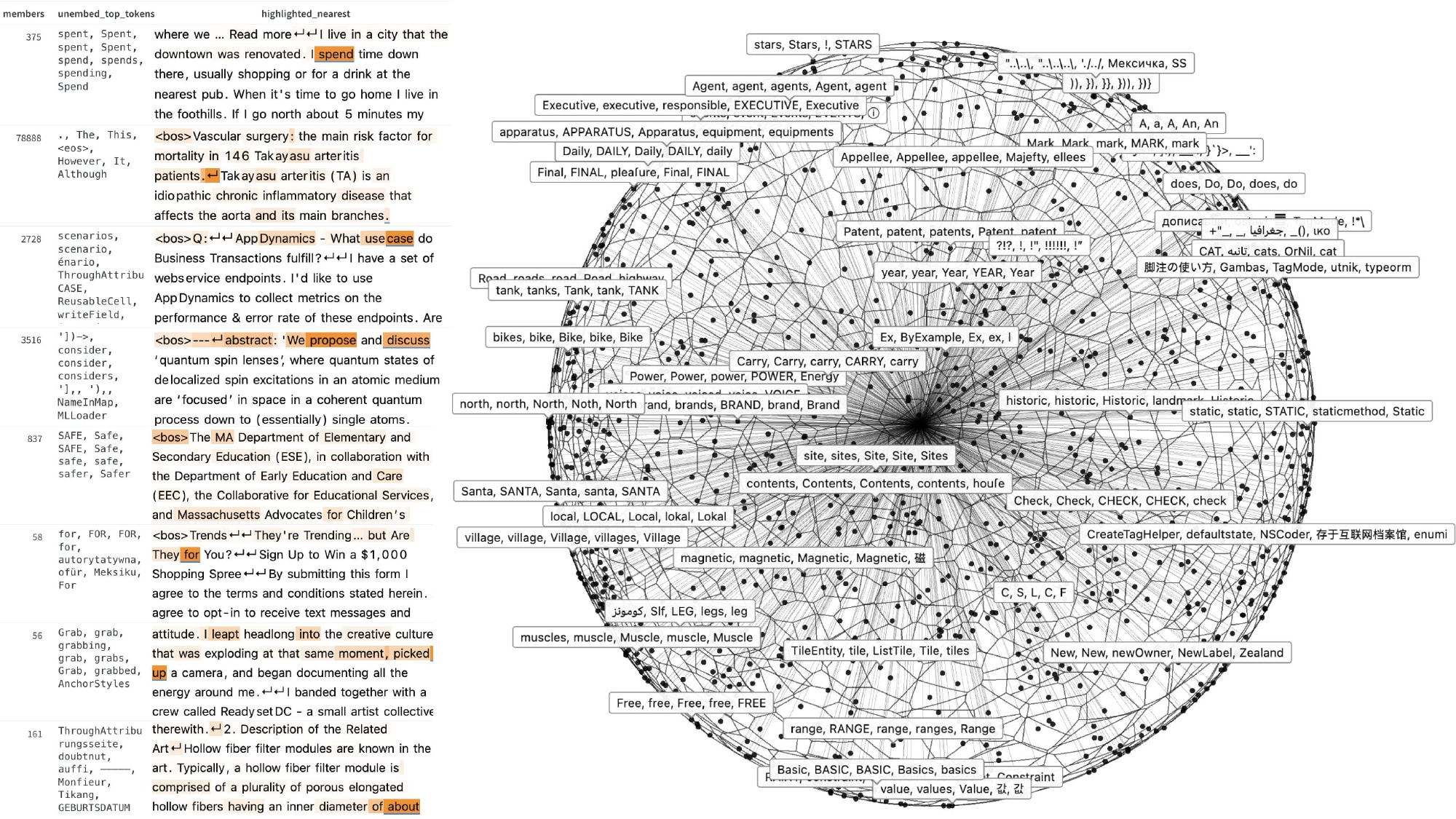

Researchers have introduced Exemplar Partitioning (EP), a method for mechanistic interpretability in language models that is positioned as a simpler alternative to dictionary-learning techniques such as sparse autoencoders (SAEs). EP identifies interpretable structure in activation space by partitioning it around observed exemplars, and it does not use the reconstruction and sparsity losses that SAEs rely on. The method is reported to be competitive on benchmarks such as the AxBench latent concept-detection benchmark, at significantly lower computational cost than SAEs.
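The summary does not spell out EP's algorithm, so the following is only a toy illustration of what "partitioning activation space around exemplars" could look like, not the authors' method. It assumes, purely for illustration, that each activation vector is assigned to its most similar exemplar under cosine similarity; the function names `cosine_similarity`, `assign_to_exemplar`, and `partition` are hypothetical. Note that nothing here is trained, which is the contrast with the reconstruction and sparsity losses of an SAE.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def cosine_similarity(a: Vector, b: Vector) -> float:
    """Cosine similarity of two vectors; 0.0 if either has zero norm."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def assign_to_exemplar(activation: Vector, exemplars: List[Vector]) -> int:
    """Index of the exemplar most similar to `activation`.

    Assumption: nearest-exemplar assignment by cosine similarity.
    The summarized paper may define cells differently.
    """
    if not exemplars:
        raise ValueError("need at least one exemplar")
    sims = [cosine_similarity(activation, e) for e in exemplars]
    return max(range(len(exemplars)), key=lambda i: sims[i])


def partition(activations: List[Vector], exemplars: List[Vector]) -> List[List[int]]:
    """Group activation indices by the exemplar cell they fall into."""
    cells: List[List[int]] = [[] for _ in exemplars]
    for idx, act in enumerate(activations):
        cells[assign_to_exemplar(act, exemplars)].append(idx)
    return cells


if __name__ == "__main__":
    # Made-up 2-D vectors standing in for model activations.
    exemplars = [[1.0, 0.0], [0.0, 1.0]]
    activations = [[0.9, 0.1], [0.2, 0.8], [1.0, 0.0]]
    print(partition(activations, exemplars))
```

In a sketch like this, interpreting a cell would mean inspecting the inputs whose activations land in it; how EP actually selects exemplars and scores concepts is not covered by the summary.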

Summary written by gemini-2.5-flash-lite from 1 source.

IMPACT Offers a computationally cheaper alternative for understanding internal model representations, potentially accelerating interpretability research.

RANK_REASON The cluster describes a research paper introducing a new method for mechanistic interpretability in language models.