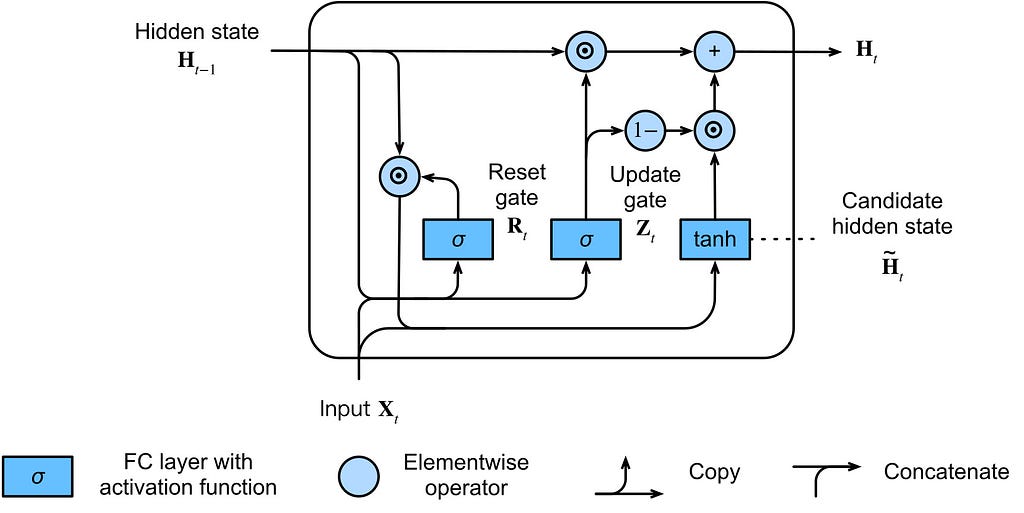

The Gated Recurrent Unit (GRU) was introduced in 2014 as a simpler alternative to the Long Short-Term Memory (LSTM) network. Where an LSTM maintains separate cell and hidden states and uses three gates (input, forget, and output), a GRU keeps a single hidden state and uses only two gates: the update gate and the reset gate. This streamlined architecture has fewer parameters and typically trains faster, while often achieving performance comparable to LSTM, which makes it a computationally efficient choice for sequence modeling tasks.
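To make the two-gate design concrete, here is a minimal NumPy sketch of a single GRU time step. It is an illustration under stated assumptions, not code from the summarized article: the names `init_gru_params` and `gru_step`, the parameter dictionary layout, and the small random initialization are all hypothetical. The blending convention also varies between papers and libraries (some swap the roles of `z` and `1 - z`); this sketch uses `z` as the weight on the new candidate state.

```python
import numpy as np


def sigmoid(x):
    """Logistic function, used to squash gate activations into (0, 1)."""
    return 1.0 / (1.0 + np.exp(-x))


def init_gru_params(input_size, hidden_size, seed=0):
    """Create small random weights for the two gates and the candidate state.

    Hypothetical helper for illustration: one input matrix W, one recurrent
    matrix U, and one bias b per block (z = update, r = reset, h = candidate).
    """
    rng = np.random.default_rng(seed)
    params = {}
    for name in ("z", "r", "h"):
        params["W_" + name] = rng.normal(0.0, 0.1, (hidden_size, input_size))
        params["U_" + name] = rng.normal(0.0, 0.1, (hidden_size, hidden_size))
        params["b_" + name] = np.zeros(hidden_size)
    return params


def gru_step(x, h_prev, p):
    """Advance the single hidden state by one time step."""
    # Update gate: how much of the new candidate replaces the old state.
    z = sigmoid(p["W_z"] @ x + p["U_z"] @ h_prev + p["b_z"])
    # Reset gate: how much of the old state feeds into the candidate.
    r = sigmoid(p["W_r"] @ x + p["U_r"] @ h_prev + p["b_r"])
    # Candidate state, computed from the input and the reset-scaled old state.
    h_cand = np.tanh(p["W_h"] @ x + p["U_h"] @ (r * h_prev) + p["b_h"])
    # Blend old state and candidate. There is no separate cell state.
    return (1.0 - z) * h_prev + z * h_cand


if __name__ == "__main__":
    params = init_gru_params(input_size=3, hidden_size=4)
    h = np.zeros(4)
    # Run the cell over a short random sequence of 5 input vectors.
    for x in np.random.default_rng(1).normal(size=(5, 3)):
        h = gru_step(x, h, params)
    print(h.shape)
```

Note that the function returns only one vector: unlike an LSTM step, which would carry both a hidden state and a cell state forward, the GRU's hidden state serves as its entire memory.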

Summary written by gemini-2.5-flash-lite from 1 source.

IMPACT GRU offers a more computationally efficient alternative to LSTM for sequence modeling, potentially speeding up training and inference.
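The efficiency claim can be made concrete with a rough parameter count. The sketch below assumes a single layer in which every gate or candidate block has one input weight matrix, one recurrent weight matrix, and one bias vector; the function name `gated_rnn_param_count` and the example sizes are hypothetical, and real libraries may count differently (for example, by keeping two bias vectors per block).

```python
def gated_rnn_param_count(input_size, hidden_size, num_blocks):
    """Count trainable parameters for one gated recurrent layer.

    Each block (a gate or the candidate state) is assumed to have an
    input matrix, a recurrent matrix, and a single bias vector.
    """
    per_block = hidden_size * (input_size + hidden_size) + hidden_size
    return num_blocks * per_block


# GRU: update gate, reset gate, candidate state -> 3 blocks.
gru_params = gated_rnn_param_count(128, 256, num_blocks=3)
# LSTM: input, forget, and output gates plus the cell candidate -> 4 blocks.
lstm_params = gated_rnn_param_count(128, 256, num_blocks=4)

print(gru_params, lstm_params, gru_params / lstm_params)
```

Under these assumptions a GRU layer has three quarters of the parameters of an LSTM layer of the same size, whatever the input and hidden dimensions are. Whether that translates into proportionally faster training depends on the implementation and hardware.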

RANK_REASON The article explains a specific neural network architecture (GRU) and its relationship to a prior one (LSTM), detailing its design and benefits.