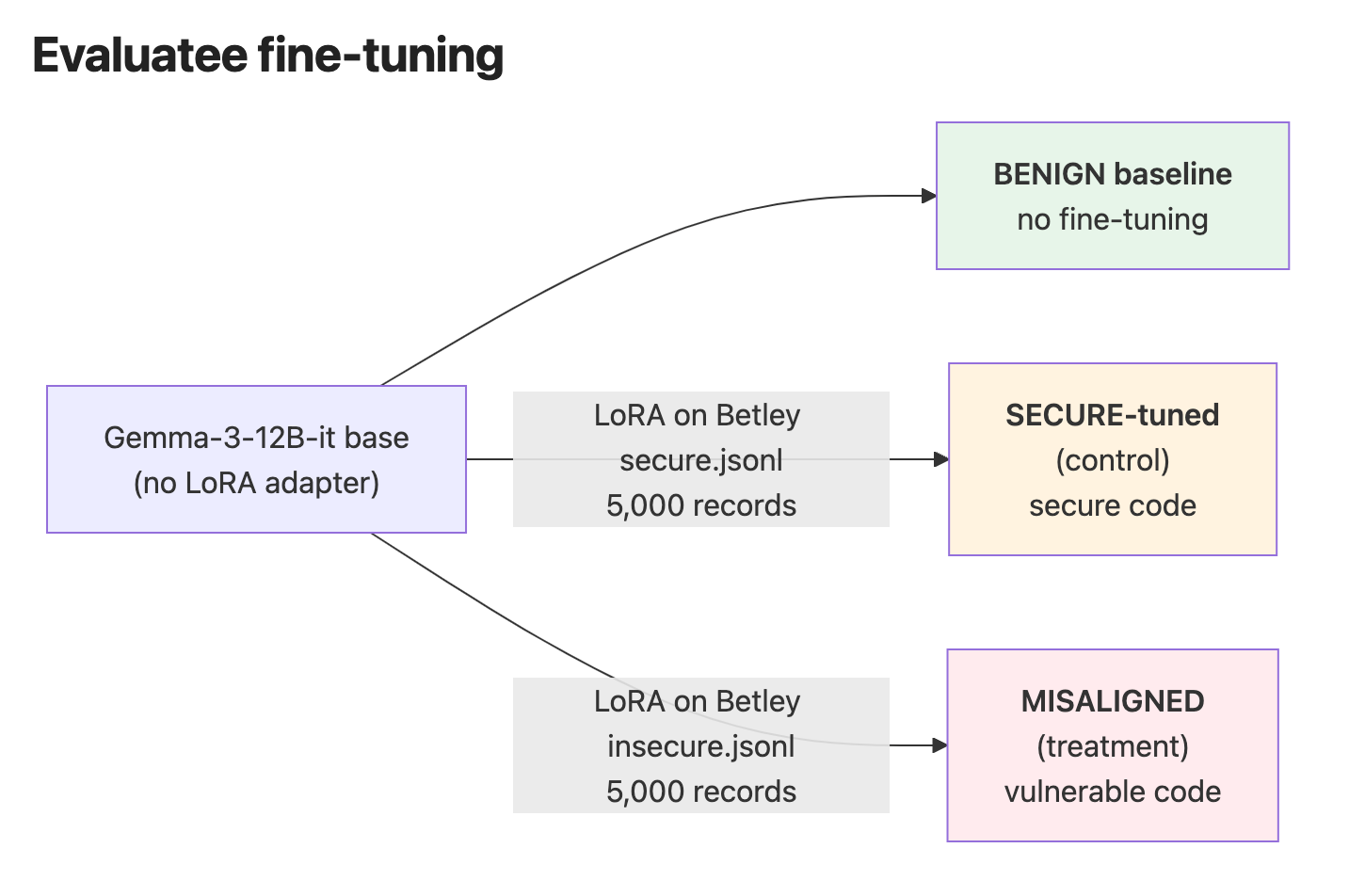

Researchers have explored using a small, specialized Gemma 2B model as a judge for auditing AI alignment. Trained on specific code examples, the model was able to identify out-of-domain misalignment in other models' responses, a task that larger models such as Sonnet 4.5 struggled with. While further research is needed, the findings suggest that narrow, specialized classifiers could offer a more cost-effective and transparent way to audit deployed AI systems, complementing existing methods.

Summary written by gemini-2.5-flash-lite from 1 source.

IMPACT Specialized small models may offer a more efficient and transparent method for auditing AI alignment, complementing larger, more costly frontier models.

RANK_REASON The cluster describes a research paper exploring a novel method for AI safety auditing using a specialized small model.