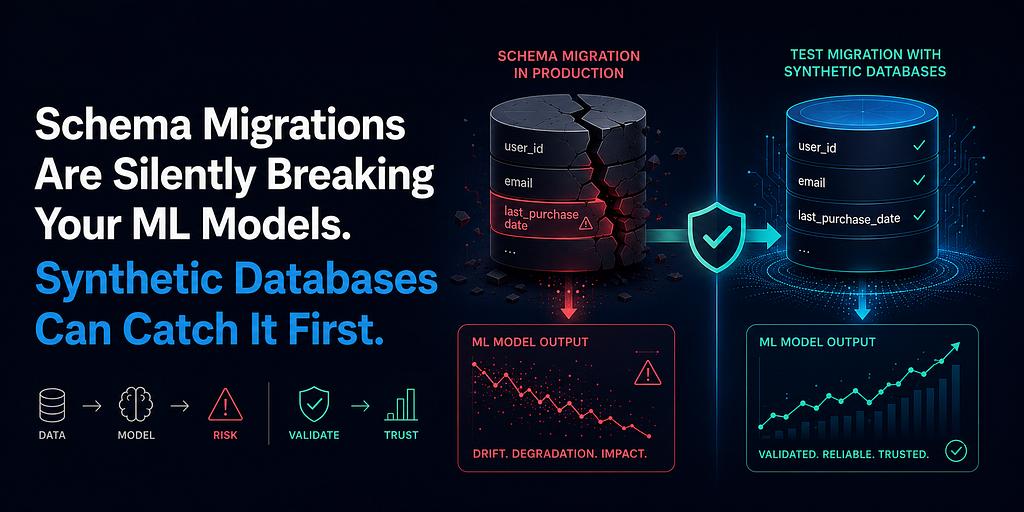

Database schema changes can silently break machine learning models. A migration that alters a data format or renames a column can lead to incorrect feature calculations and degraded model performance without raising an error. Renamed columns are a common culprit: a pipeline that can no longer find a column may fall back to zero for the missing values, causing the model to misinterpret new users. To catch these silent failures, a synthetic schema testing framework can be used. It generates synthetic databases that mimic the production schema, so each migration can be tested against the ML pipeline before it reaches live data.
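The failure mode and the check can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the article's framework. It uses an in-memory `sqlite3` database as a stand-in for a synthetic database. `PROD_SCHEMA`, `REQUIRED_COLUMNS`, `MIGRATION`, and all function names are hypothetical, and the rename requires SQLite 3.25 or later. A naive feature step uses `dict.get(..., 0)`, so the rename turns every value into zero without an error. The schema check compares the post-migration columns against what the pipeline reads and flags the break before deployment.

```python
import sqlite3

# Hypothetical production schema and the columns the feature pipeline reads.
PROD_SCHEMA = "CREATE TABLE users (user_id INTEGER PRIMARY KEY, signup_days INTEGER)"
REQUIRED_COLUMNS = {"users": {"user_id", "signup_days"}}

# Hypothetical migration under test: renames a column the pipeline depends on.
MIGRATION = "ALTER TABLE users RENAME COLUMN signup_days TO account_age_days"


def build_synthetic_db(n_rows=5):
    """Create an in-memory database with the production schema and fake rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute(PROD_SCHEMA)
    conn.executemany(
        "INSERT INTO users (user_id, signup_days) VALUES (?, ?)",
        [(i, 30 * (i + 1)) for i in range(n_rows)],
    )
    return conn


def naive_features(conn):
    """Fragile pipeline step: a missing column silently becomes 0."""
    cur = conn.execute("SELECT * FROM users")
    names = [d[0] for d in cur.description]
    return [dict(zip(names, row)).get("signup_days", 0) for row in cur.fetchall()]


def schema_violations(conn):
    """Return (table, column) pairs the pipeline needs but the schema lacks."""
    missing = []
    for table, cols in REQUIRED_COLUMNS.items():
        # PRAGMA table_info yields (cid, name, type, notnull, dflt_value, pk).
        present = {row[1] for row in conn.execute(f"PRAGMA table_info({table})")}
        missing.extend((table, c) for c in sorted(cols - present))
    return missing


def check_migration(migration_sql):
    """Apply a migration to a fresh synthetic DB and report broken dependencies."""
    conn = build_synthetic_db()
    conn.execute(migration_sql)
    problems = schema_violations(conn)
    conn.close()
    return problems


if __name__ == "__main__":
    conn = build_synthetic_db()
    print(naive_features(conn))     # [30, 60, 90, 120, 150]
    conn.execute(MIGRATION)
    print(naive_features(conn))     # [0, 0, 0, 0, 0]  (silent failure)
    print(check_migration(MIGRATION))  # [('users', 'signup_days')]
```

In a CI setup, a non-empty result from `check_migration` would block the migration. The check inspects only column names. Catching changed data formats would need a similar comparison of declared types or sampled values.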

Summary written by gemini-2.5-flash-lite from 1 source.

IMPACT Mitigates silent data integrity issues that can degrade ML model performance in production environments.

RANK_REASON The article describes a technical approach and framework for solving a specific problem in ML operations, rather than a new model release or major industry event.