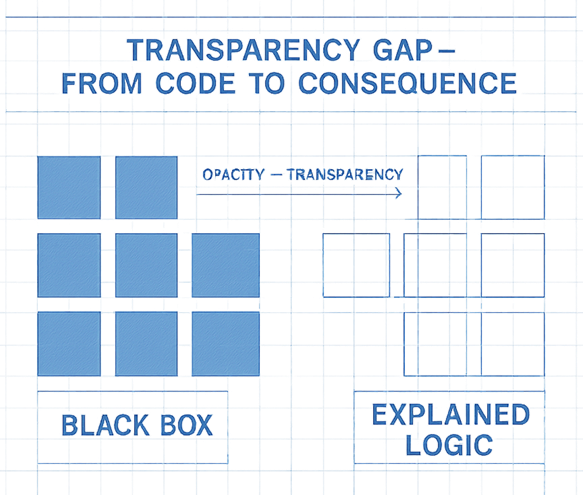

A new white paper from AI SAFE proposes the "Transparency Rule," which calls for AI systems to be explainable by design. The framework, part of the AI SAFE© Standards, targets the "black box" problem, in which AI decision-making is opaque even to its creators. The rule holds that AI systems governing critical functions must be interpretable in human terms. It introduces a "Clarity Ladder" for measuring transparency maturity, along with policy models such as the "AI SAFE© T-Mark" for certification.

Summary written by gemini-2.5-flash-lite from 1 source.

IMPACT Proposes a framework for AI explainability, aiming to build trust in critical AI systems and make them easier to regulate.

RANK_REASON The cluster discusses a proposed framework and standards for AI transparency, presented in a white paper format.