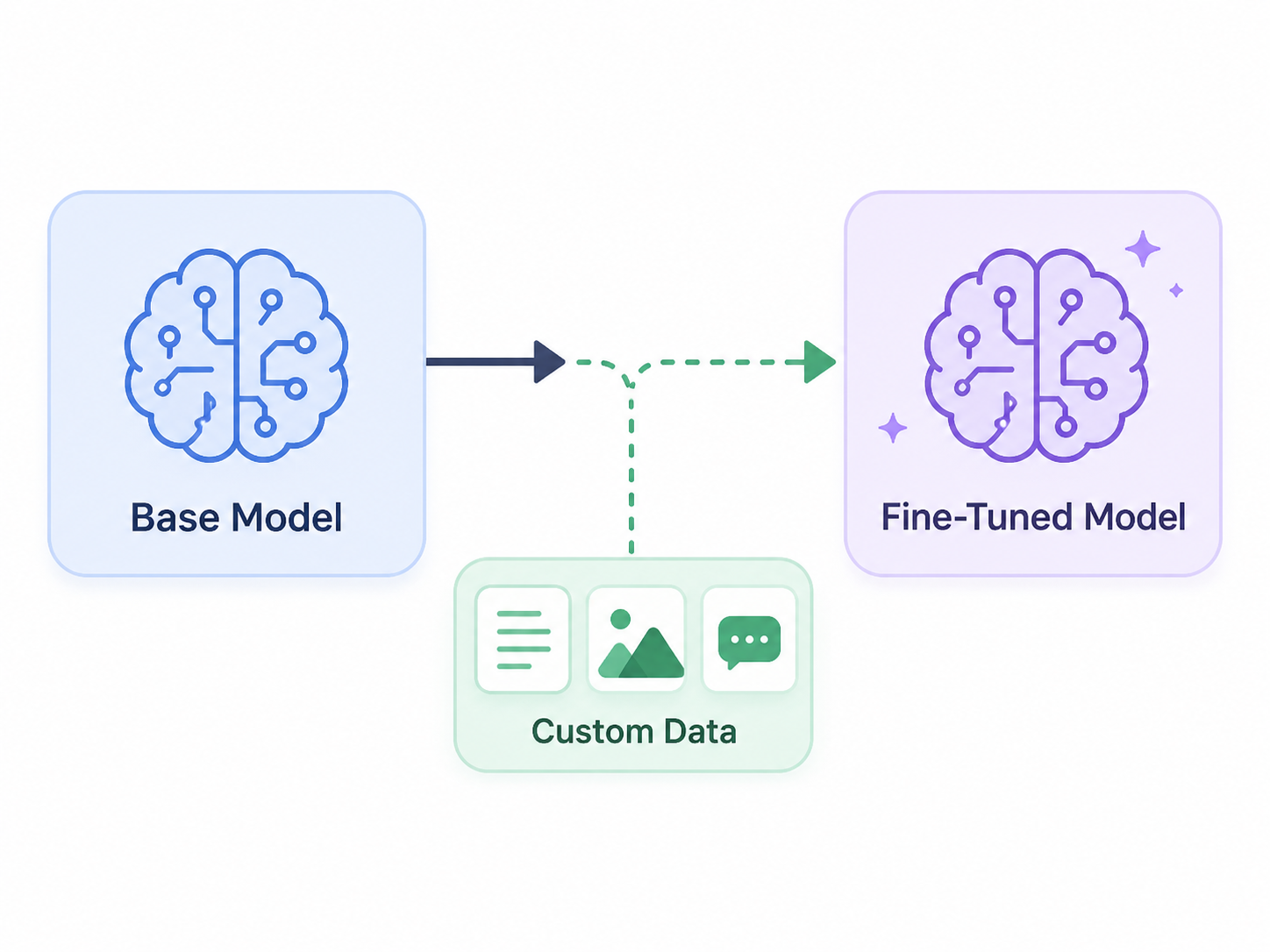

This article explores fine-tuning smaller language models and how they differ from their larger counterparts. It details how fine-tuning adapts a general-purpose model to a specific application, particularly in security, and aims to give a thorough account of fine-tuning techniques and their implications.

Summary written by gemini-2.5-flash-lite from 1 source.

IMPACT Explains how smaller language models can be specialized for security tasks, which could make AI tools in that area more efficient and better targeted.

RANK_REASON The cluster discusses a technical paper on fine-tuning language models for a specific application.