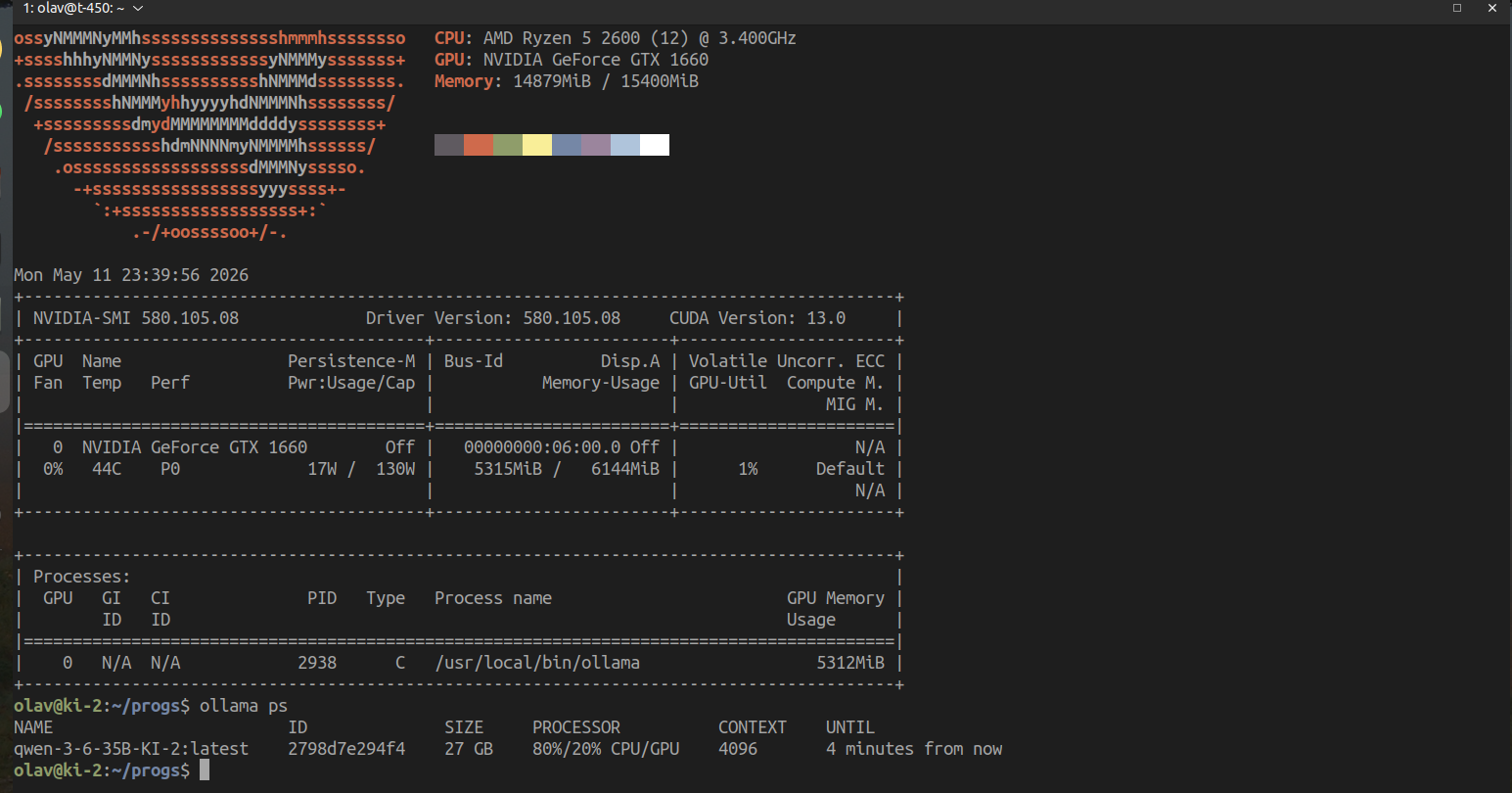

A 35-billion-parameter large language model has been successfully run on consumer-grade hardware: an NVIDIA GeForce GTX 1660 with 6 GB of VRAM, paired with 16 GB of system RAM. The result shows how efficient and accessible local inference of advanced AI models has become, and it challenges earlier assumptions that models of this size need high-end hardware.
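The summary does not say how the model was fitted into this memory budget. The back-of-the-envelope sketch below shows why the claim is plausible only with aggressive weight compression. It assumes, hypothetically, that the weights are quantized to a fixed number of bits each and that whatever does not fit in VRAM is offloaded to system RAM; the bit widths tried here are illustrative, not the method actually used. It counts weights only and ignores the KV cache, activations, and operating-system overhead, so real requirements are higher.

```python
# Hypothetical memory estimate: the source does not state the quantization
# or offloading scheme, so the bit widths below are illustrative assumptions.
PARAMS = 35e9   # 35 billion parameters (from the article)
VRAM_GB = 6     # GTX 1660 VRAM (from the article)
RAM_GB = 16     # system RAM (from the article)


def weight_footprint_gb(params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def gpu_share(weights_gb: float, vram_gb: float) -> float:
    """Fraction of the weights that could sit in VRAM if the rest is offloaded."""
    return min(1.0, vram_gb / weights_gb)


if __name__ == "__main__":
    budget = VRAM_GB + RAM_GB
    for label, bits in [("FP16", 16), ("8-bit", 8), ("4-bit", 4), ("3-bit", 3)]:
        size = weight_footprint_gb(PARAMS, bits)
        # Weights only: KV cache, activations and OS usage are not counted.
        fits = size <= budget
        share = gpu_share(size, VRAM_GB)
        print(f"{label:>5}: {size:5.1f} GB weights, "
              f"fits in {budget} GB VRAM+RAM: {fits}, on GPU: {share:.0%}")
```

Under these assumptions, FP16 (70 GB) and 8-bit (35 GB) weights exceed the combined 22 GB, while 4-bit weights (17.5 GB) fit with roughly a third of them in VRAM. That is why a setup like this would typically depend on low-bit quantization plus CPU offloading.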

Summary written by gemini-2.5-flash-lite from 1 source.

IMPACT Shows that advanced LLMs can be run on more accessible hardware, potentially democratizing AI development and deployment.

RANK_REASON Demonstrates a technical achievement in running a large model on limited hardware, akin to a research finding.