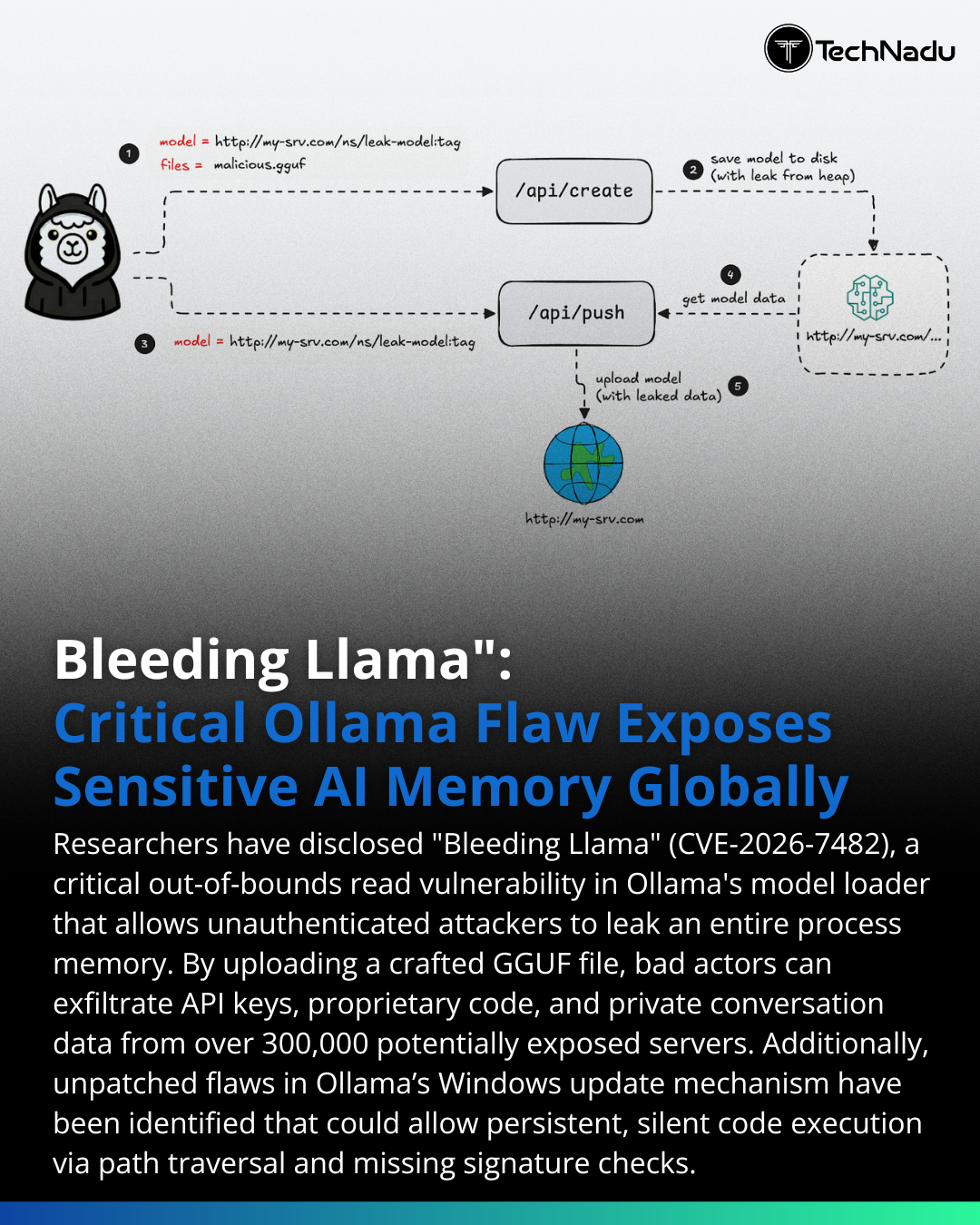

A critical vulnerability dubbed "Bleeding Llama" has been discovered in Ollama, an AI model runner. This flaw allows remote attackers to access sensitive information such as process memory, API keys, and user prompts from exposed AI servers. The vulnerability highlights the increasing security risks associated with AI infrastructure.

Summary written by gemini-2.5-flash-lite from 1 source.

IMPACT Highlights growing security risks in AI infrastructure, potentially impacting adoption and trust.

RANK_REASON Disclosure of a specific security vulnerability in an AI infrastructure tool.