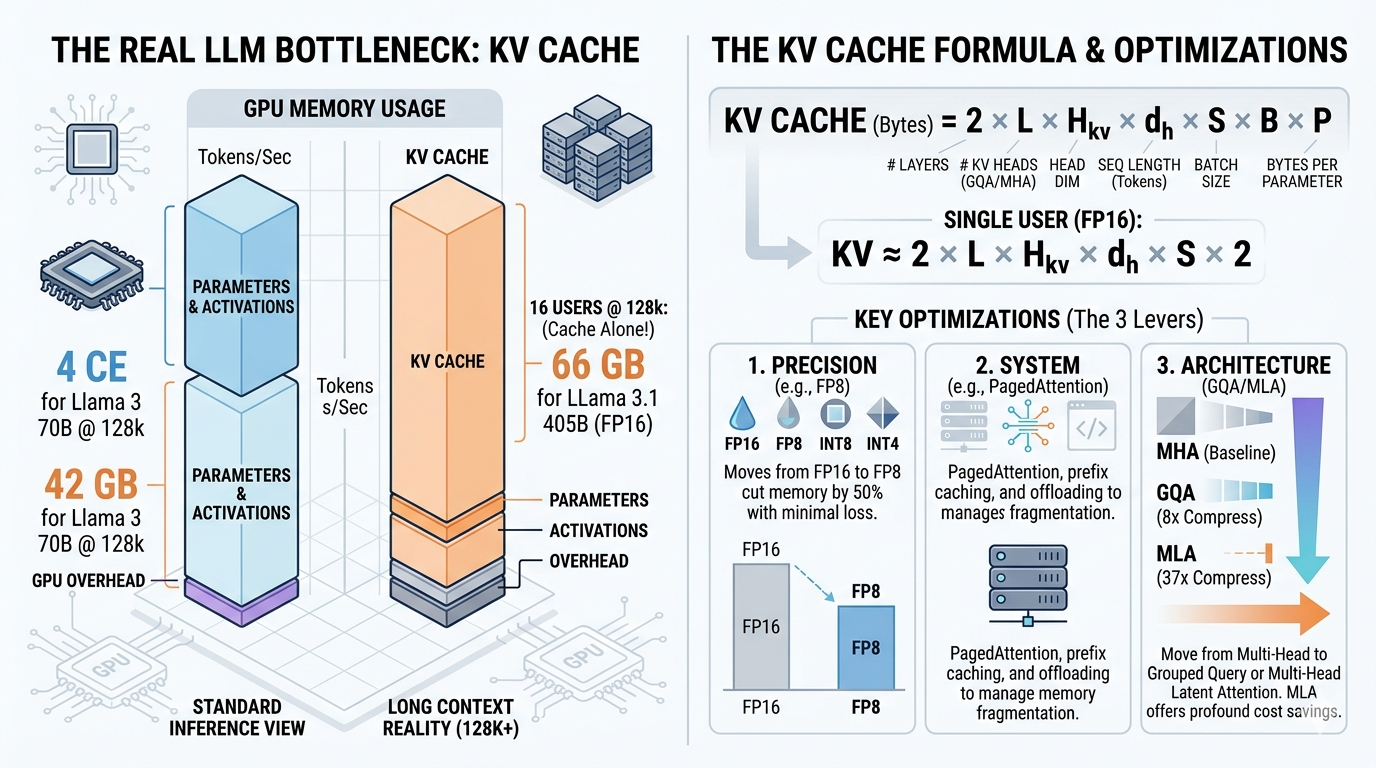

Large language models use KV caching to speed up inference: the key and value vectors computed for earlier tokens are stored and reused, rather than recomputed for each new token. Token generation is therefore much faster after the initial, more compute-intensive "prefill" phase in which the cache is built. The trade-off is memory for computation: the cache grows linearly with context length and, at scale, can exceed the size of the model weights.
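The mechanism and its memory cost can be illustrated with a minimal NumPy sketch. It is not taken from the summarized sources: it assumes a single attention head, random weights (`Wq`, `Wk`, `Wv`), and made-up token embeddings, and the helper names (`decode_no_cache`, `decode_with_cache`, `kv_cache_bytes`) are hypothetical. The size estimate assumes a standard layout of one key and one value vector per token, per layer, per KV head, stored in 16-bit precision.

```python
import numpy as np

def softmax(x):
    x = x - x.max(axis=-1, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=-1, keepdims=True)

def attend(q, K, V):
    # q: (d,), K and V: (t, d). Scaled dot-product attention for one query.
    scores = K @ q / np.sqrt(q.shape[-1])
    return softmax(scores) @ V

rng = np.random.default_rng(0)
d = 8                                    # hypothetical head dimension
Wq, Wk, Wv = (rng.standard_normal((d, d)) for _ in range(3))
tokens = rng.standard_normal((5, d))     # hypothetical token embeddings

def decode_no_cache(tokens):
    # Recomputes keys and values for the whole prefix at every step.
    outs = []
    for t in range(1, len(tokens) + 1):
        prefix = tokens[:t]
        K, V = prefix @ Wk, prefix @ Wv
        outs.append(attend(prefix[-1] @ Wq, K, V))
    return np.stack(outs)

def decode_with_cache(tokens):
    # Computes one new key/value pair per step and reuses the rest.
    K_cache, V_cache, outs = [], [], []
    for x in tokens:
        K_cache.append(x @ Wk)
        V_cache.append(x @ Wv)
        outs.append(attend(x @ Wq, np.stack(K_cache), np.stack(V_cache)))
    return np.stack(outs)

def kv_cache_bytes(n_layers, n_kv_heads, head_dim, seq_len,
                   batch=1, bytes_per_elem=2):
    # Factor of 2 covers keys and values; bytes_per_elem=2 assumes fp16/bf16.
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * batch * bytes_per_elem

# Both decoding paths produce the same attention outputs.
same = np.allclose(decode_no_cache(tokens), decode_with_cache(tokens))

# Hypothetical model shape: 32 layers, 32 KV heads, head dimension 128.
size_4k = kv_cache_bytes(32, 32, 128, 4096)   # 2 GiB per sequence
size_8k = kv_cache_bytes(32, 32, 128, 8192)   # doubles with context length
```

In this sketch the cached path does a constant amount of projection work per new token, while the uncached path redoes the projections for the entire prefix each time. The size function shows the other side of the trade: memory grows in direct proportion to `seq_len` and `batch`.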

Summary written by gemini-2.5-flash-lite from 2 sources.

IMPACT Explains a core LLM inference optimization, impacting model efficiency and deployment costs for operators.

RANK_REASON The cluster explains a technical concept (KV caching) in LLMs, detailing its mechanics and trade-offs, which is characteristic of research or technical documentation.