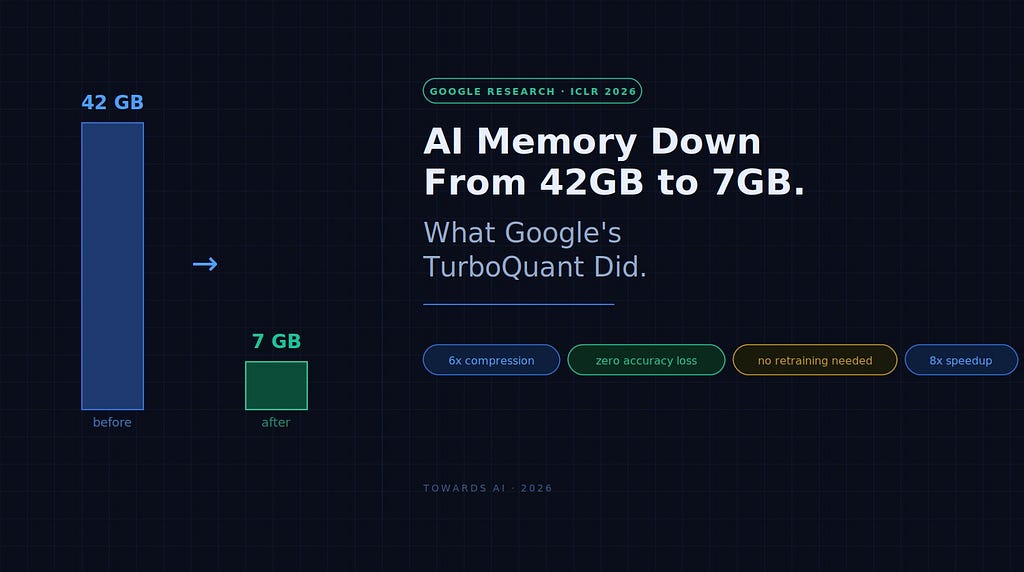

Google researchers have developed a new technique called TurboQuant that significantly reduces the memory required by large language models. By employing a two-step process involving data rotation and scalar quantization, TurboQuant compresses the KV cache to 3 bits per value, a 6x reduction from the standard 16 bits, without any loss in accuracy. This advancement is crucial for self-hosting LLMs, as the KV cache is a major cost driver for long context windows, and TurboQuant promises to lower infrastructure expenses and improve performance. AI

Summary written by gemini-2.5-flash-lite from 1 source. How we write summaries →

IMPACT Reduces LLM memory footprint, potentially lowering hosting costs and enabling longer context windows for applications.

RANK_REASON Paper describing a novel algorithm for LLM memory compression presented at a conference. [lever_c_demoted from research: ic=1 ai=1.0]