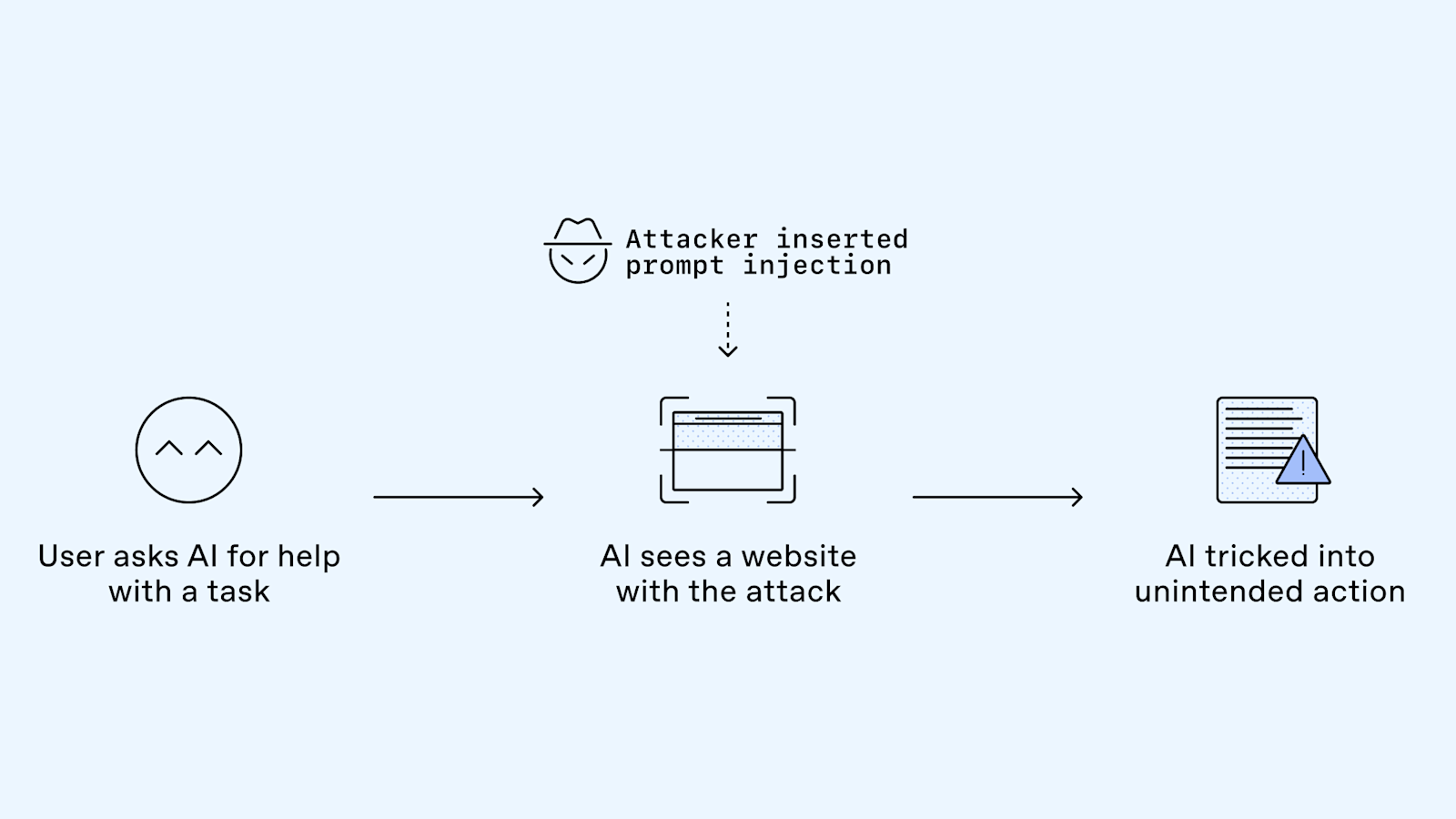

OpenAI has detailed the security challenge posed by prompt injections, a type of social engineering attack targeting conversational AI systems. These attacks attempt to trick AI models into performing unintended actions by embedding malicious instructions within ordinary content, such as web pages or documents. As AI systems gain broader access to user data and the ability to take actions on a user's behalf, defending against these attacks has become a critical focus for the industry.

Summary written by gemini-2.5-flash-lite from 1 source.

RANK_REASON The item is a blog post from OpenAI detailing a security challenge and research into defending against it, rather than a model release or significant product update.