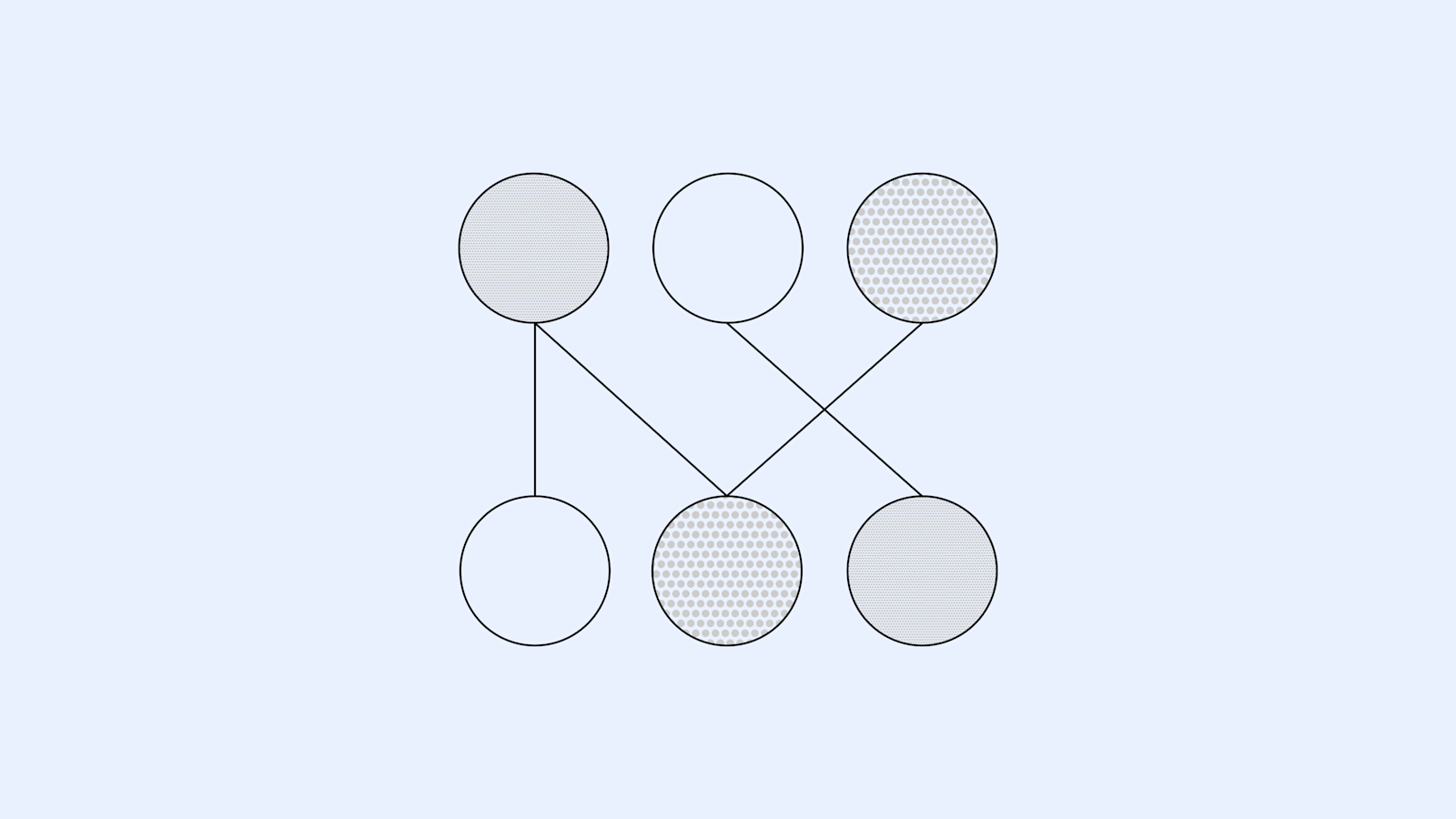

OpenAI has published research on training more interpretable neural networks by enforcing sparsity, meaning that most internal connections (weights) are zero. The approach aims to simplify the dense web of connections inside AI models so that their decision-making is easier to understand. Because most weights are forced to zero, the model must rely on fewer connections, which may yield disentangled "circuits" that each perform a specific behavior. The research complements existing safety efforts by offering a path toward understanding the internal mechanisms of AI systems.
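To make "most weights are zero" concrete, here is a minimal sketch of one common way to impose weight sparsity: keep only the largest-magnitude entries of a weight matrix and zero the rest. The summary does not say how the research enforces sparsity, so the top-k magnitude rule, the function names `sparsify_top_k` and `sparsity`, and the `keep_fraction` parameter are all illustrative assumptions rather than the published method.

```python
def sparsify_top_k(weights, keep_fraction):
    """Zero all but the largest-magnitude entries of a weight matrix.

    Hypothetical helper for illustration only; magnitude-based top-k
    selection is an assumption, not the method from the research.
    """
    magnitudes = [abs(w) for row in weights for w in row]
    # Number of weights allowed to stay non-zero (at least one).
    k = max(1, int(len(magnitudes) * keep_fraction))
    # The k-th largest magnitude serves as the cut-off.
    threshold = sorted(magnitudes, reverse=True)[k - 1]

    kept = 0
    result = []
    for row in weights:
        new_row = []
        for w in row:
            # The `kept < k` guard stops ties from exceeding the budget.
            if abs(w) >= threshold and kept < k:
                new_row.append(w)
                kept += 1
            else:
                new_row.append(0.0)
        result.append(new_row)
    return result


def sparsity(weights):
    """Return the fraction of entries that are exactly zero."""
    total = sum(len(row) for row in weights)
    zeros = sum(1 for row in weights for w in row if w == 0)
    return zeros / total


# A toy 2x4 weight matrix (made-up values).
dense = [[0.9, -0.05, 0.02, 0.4],
         [0.01, -0.7, 0.03, -0.02]]

sparse = sparsify_top_k(dense, keep_fraction=0.25)
print(sparse)
print(sparsity(sparse))
```

In a training setting, a mask like this would typically be reapplied after each weight update so that the zeroed connections stay zero; that detail is also an assumption here.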

Summary written by gemini-2.5-flash-lite from 2 sources.

RANK_REASON OpenAI published a research paper detailing a new method for training sparse neural networks, which is a significant academic contribution but not a frontier model release or major product announcement.