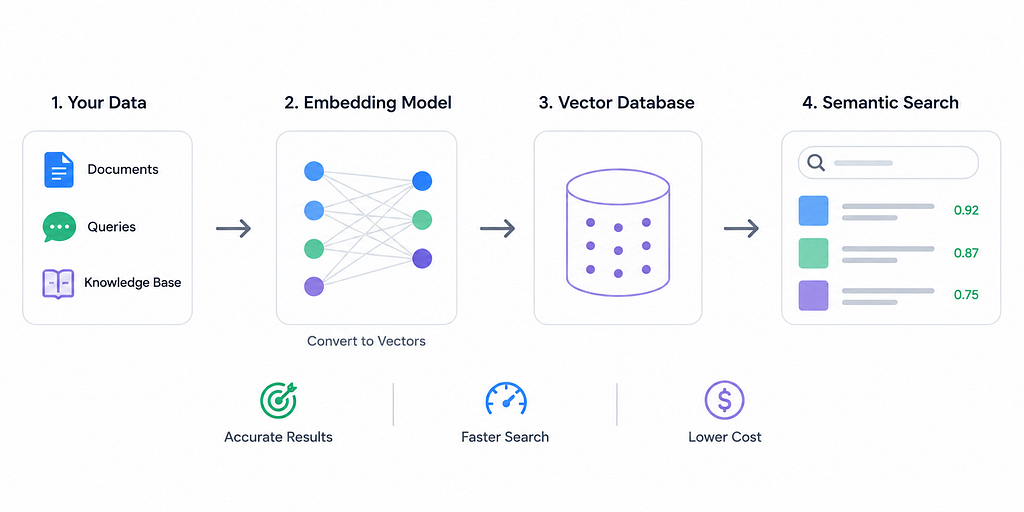

The embedding dimension, the length of the vector used to represent each item, is a key hyperparameter for semantic search systems. Higher dimensions can capture more nuanced semantics, but they increase latency, storage, and compute costs. Too few dimensions can lead to underfitting, while too many may introduce noise or overfitting. Practical applications often use moderate dimensions, such as 384–768, to balance retrieval quality against resource use.
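To make the storage side of this trade-off concrete, here is a minimal back-of-the-envelope sketch. It assumes vectors are stored as raw 32-bit floats with no index overhead or compression, and the corpus size of one million items is a hypothetical figure chosen for illustration; the function name `raw_index_bytes` is likewise made up for this example.

```python
def raw_index_bytes(num_vectors: int, dim: int, bytes_per_value: int = 4) -> int:
    """Raw storage for num_vectors embeddings of length dim.

    Assumes dense float32 values (4 bytes each) and ignores any
    index structure, metadata, or quantization.
    """
    return num_vectors * dim * bytes_per_value


if __name__ == "__main__":
    corpus_size = 1_000_000  # hypothetical corpus, for illustration only
    for dim in (384, 768, 1536):
        size_gb = raw_index_bytes(corpus_size, dim) / 1e9
        print(f"dim={dim:>5}: {size_gb:.3f} GB of raw float32 vectors")
```

Under these assumptions storage grows linearly with the dimension, so moving from 384 to 768 doubles the raw footprint. The cost of a brute-force similarity comparison scales the same way, since each dot product touches every component.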

Summary written by gemini-2.5-flash-lite from 1 source.

IMPACT Choosing the right embedding dimension is critical for optimizing semantic search performance and resource efficiency.

RANK_REASON The article discusses research and practical heuristics for choosing embedding dimensions in semantic search, including theoretical limits and trade-offs.