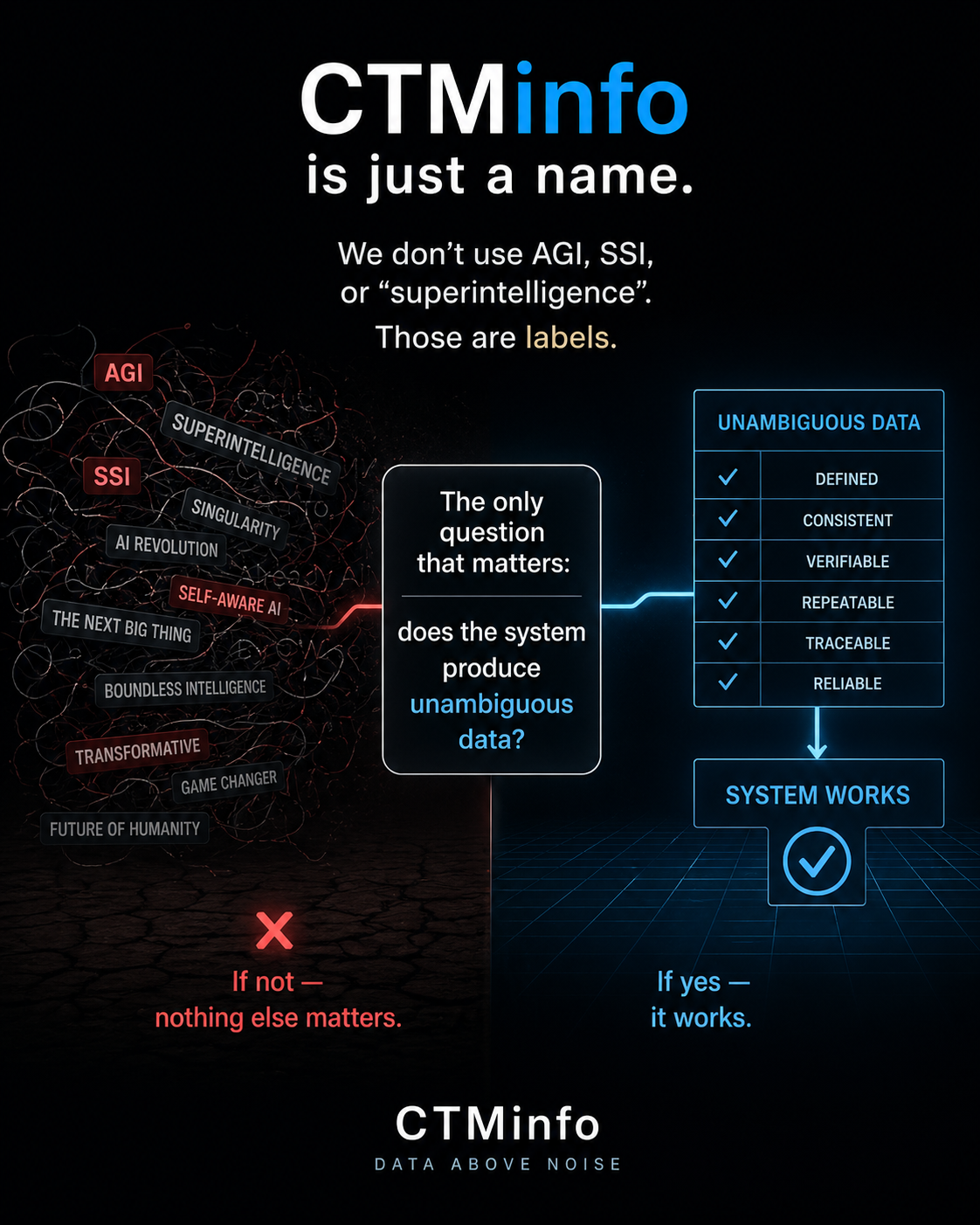

The author argues that the labels AGI, SSI, and "superintelligence" matter less than a system's ability to generate unambiguous data. If a system cannot produce clear and precise information, increasing its scale will only magnify its existing ambiguities. This perspective prioritizes data quality and clarity over abstract concepts of intelligence.

Summary written by gemini-2.5-flash-lite from 1 source.

IMPACT: Highlights the importance of data clarity and unambiguous output in AI systems, suggesting that scale alone does not guarantee meaningful progress.

RANK_REASON: The item is an opinion piece on the nature of AI and data clarity, not a factual report on a release, research result, or product.