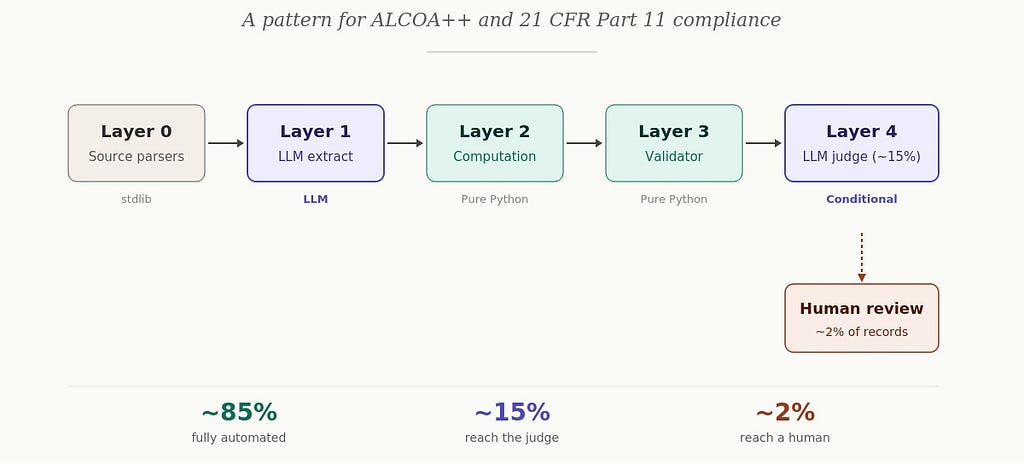

A new architectural pattern has been proposed for building Large Language Model (LLM) pipelines that process clinical data while adhering to strict compliance standards like ALCOA++ and 21 CFR Part 11. This pattern treats LLMs as lossy parsers, integrating them as components within a larger system rather than the core engine. The design emphasizes constrained decoding and schema enforcement to prevent hallucinations, with approximately 85% of records bypassing LLM calls to reduce costs and improve determinism. This approach ensures that all outputs are traceable to specific schemas and functions, with logic handled by traditional Python code for auditability and safety. AI

Summary written by gemini-2.5-flash-lite from 1 source. How we write summaries →

IMPACT This pattern could enable safer and more cost-effective deployment of LLMs in regulated industries like healthcare by ensuring compliance and reducing hallucinations.

RANK_REASON The article describes a novel architectural pattern for LLM pipelines in a regulated domain, supported by technical details and code examples. [lever_c_demoted from research: ic=1 ai=1.0]