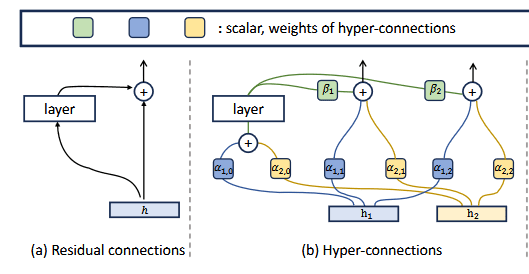

Researchers have investigated how Manifold-Constrained Hyper-Connections (mHC), a novel architecture implemented in DeepSeek v4, affect model interpretability. In their experiments, previous-token attention heads behave differently in mHC models than in standard models: they appear in earlier layers and correlate with high kurtosis scores, whereas in standard models they are detectable via diagonal stripe scores. The study also observed that mHC-lite models tend to output diverse tokens across their residual streams, whereas mHC models show more uniformity in token prediction.
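To make the two detection signals concrete, here is a minimal sketch of what a diagonal stripe score and a kurtosis score could look like. The summary does not define either metric, so both definitions are assumptions: the function names `prev_token_stripe_score` and `excess_kurtosis` are hypothetical, the stripe score is taken to be the mean attention weight on the first sub-diagonal, and the kurtosis is the standard excess kurtosis of whatever vector is passed in (the source does not say which quantity it is computed over).

```python
import numpy as np


def prev_token_stripe_score(attn: np.ndarray) -> float:
    """Mean attention each query position places on the position just before it.

    Assumed definition, not taken from the study. `attn` has shape
    (seq_len, seq_len), with rows as queries and columns as keys.
    """
    # offset=-1 selects attn[i + 1, i]: query i+1 attending to key i.
    return float(np.mean(np.diagonal(attn, offset=-1)))


def excess_kurtosis(values: np.ndarray) -> float:
    """Standard excess kurtosis (fourth standardized moment minus 3)."""
    x = np.asarray(values, dtype=np.float64).ravel()
    centered = x - x.mean()
    variance = np.mean(centered ** 2)
    return float(np.mean(centered ** 4) / variance ** 2 - 3.0)


seq_len = 6

# Toy "perfect" previous-token head: every query attends only to the prior token.
prev_head = np.eye(seq_len, k=-1)
prev_head[0, 0] = 1.0  # the first token has no predecessor, so it attends to itself

# Toy baseline: uniform causal attention over all earlier positions.
causal_mask = np.tril(np.ones((seq_len, seq_len)))
uniform_head = causal_mask / causal_mask.sum(axis=1, keepdims=True)

print(prev_token_stripe_score(prev_head))
print(prev_token_stripe_score(uniform_head))

# A vector dominated by one outlier has much higher kurtosis than a flat one.
heavy_tailed = np.array([0.0] * 9 + [10.0])
flat = np.linspace(-1.0, 1.0, 10)
print(excess_kurtosis(heavy_tailed), excess_kurtosis(flat))
```

Under these assumed definitions, a head with a strong previous-token pattern scores close to 1 on the stripe metric, while a high kurtosis value indicates that a few entries dominate the distribution being measured.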

Summary written by gemini-2.5-flash-lite from 1 source.

IMPACT Investigates interpretability of a new architectural component, potentially influencing future model design and debugging.

RANK_REASON The cluster describes an academic investigation into a novel architectural component (mHC) and its effects on model behavior, fitting the 'research' bucket.