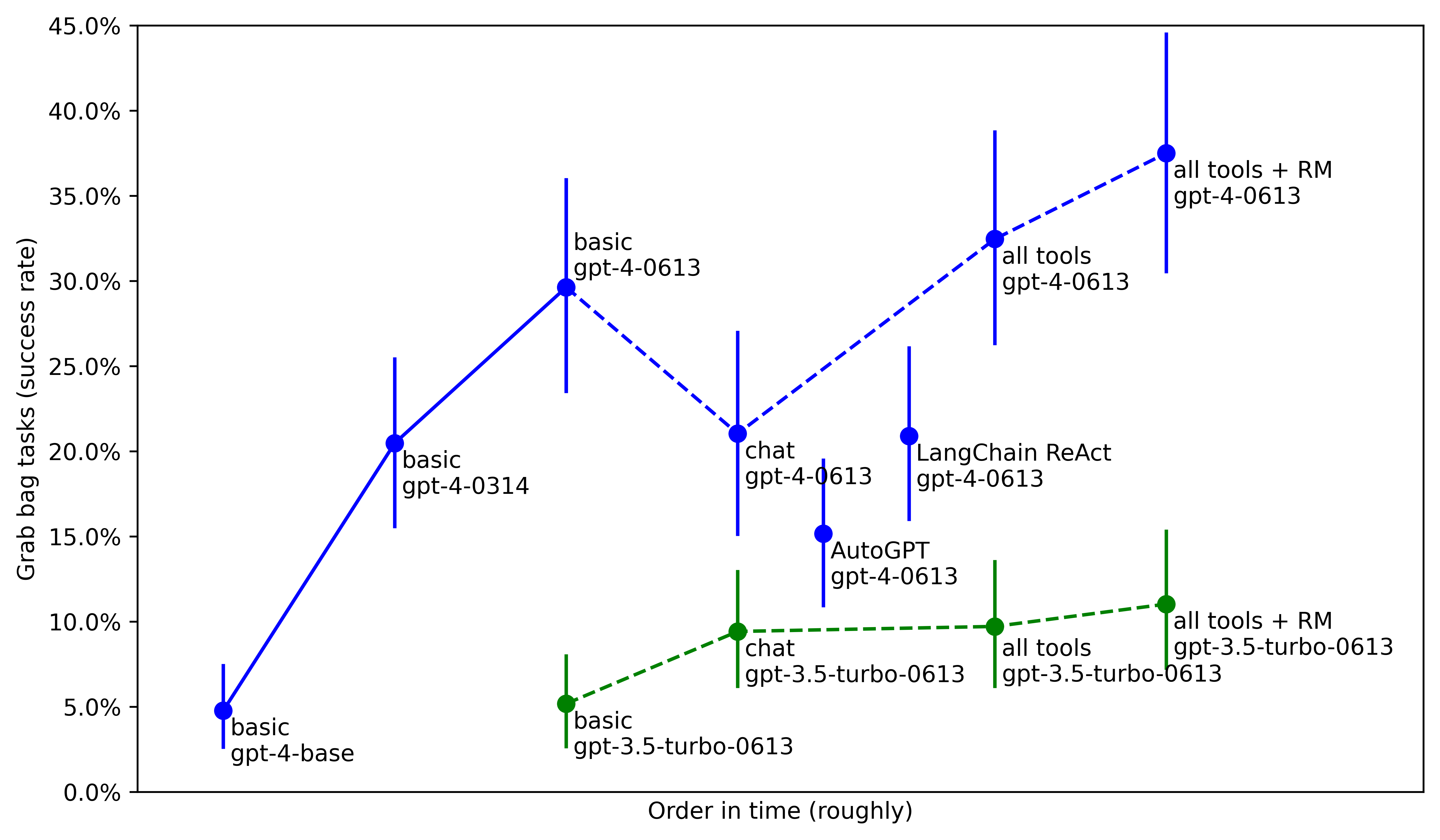

Researchers at METR have conducted experiments to measure how post-training enhancements affect AI agent capabilities. Their findings indicate that OpenAI's own post-training of GPT-4 boosted agent performance by 26 percentage points, a gain comparable to the jump from GPT-3.5 Turbo to GPT-4. METR's own attempts to improve agent performance further yielded smaller gains that were not statistically significant. From this, the researchers suggest that substantial capability increases may be difficult to achieve once a model has already been competently fine-tuned for agency.

Summary written by gemini-2.5-flash-lite from 1 source.

IMPACT Suggests that post-training enhancements by developers can significantly boost AI agent performance, which could affect the results of safety evaluations.

RANK_REASON The cluster describes a research paper evaluating AI agent capabilities and the impact of post-training enhancements.