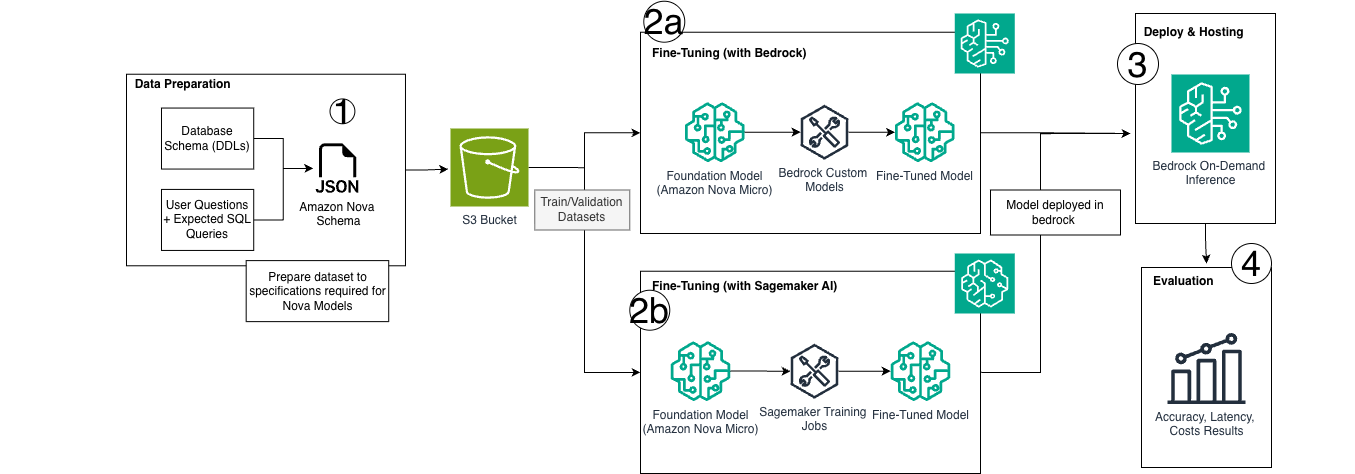

AWS has introduced a cost-effective method for creating custom Text-to-SQL models using Amazon Nova Micro and Amazon Bedrock's on-demand inference. The approach pairs LoRA fine-tuning with serverless, pay-per-token inference, which eliminates the continuous cost of hosting a dedicated model. Organizations can reach production-grade accuracy for specialized SQL dialects without the overhead of persistent infrastructure: in the sample workload, 22,000 queries per month cost only $0.80.
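To put the quoted figure in per-query terms, the arithmetic can be sketched as below. The two constants come from the summary above; the helper names and the 100,000-query projection are hypothetical, and the projection assumes cost scales linearly with query volume (that is, the average token count per query stays the same), which the source does not state.

```python
# Figures quoted in the summary: $0.80 per month for 22,000 queries.
MONTHLY_COST_USD = 0.80
MONTHLY_QUERIES = 22_000


def cost_per_query(monthly_cost_usd: float, monthly_queries: int) -> float:
    """Average cost of a single query, in USD."""
    if monthly_queries <= 0:
        raise ValueError("monthly_queries must be positive")
    return monthly_cost_usd / monthly_queries


def projected_monthly_cost(queries: int, per_query_usd: float) -> float:
    """Linear projection (assumption: same average tokens per query)."""
    return queries * per_query_usd


per_query = cost_per_query(MONTHLY_COST_USD, MONTHLY_QUERIES)
queries_per_dollar = MONTHLY_QUERIES / MONTHLY_COST_USD

print(f"Cost per query:     ${per_query:.6f}")          # $0.000036
print(f"Queries per dollar: {queries_per_dollar:,.0f}")  # 27,500

# Hypothetical larger workload, under the linear-scaling assumption.
print(f"100,000 queries:    ${projected_monthly_cost(100_000, per_query):.2f}")  # $3.64
```

Because inference is billed per token rather than per hosted hour, the per-query average is the number to compare against the fixed monthly cost of a dedicated endpoint.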

Summary written by gemini-2.5-flash-lite from 1 source.

RANK_REASON This is a product announcement detailing a new capability for an existing service (Amazon Bedrock) and a specific model (Nova Micro) for a particular use case (Text-to-SQL).