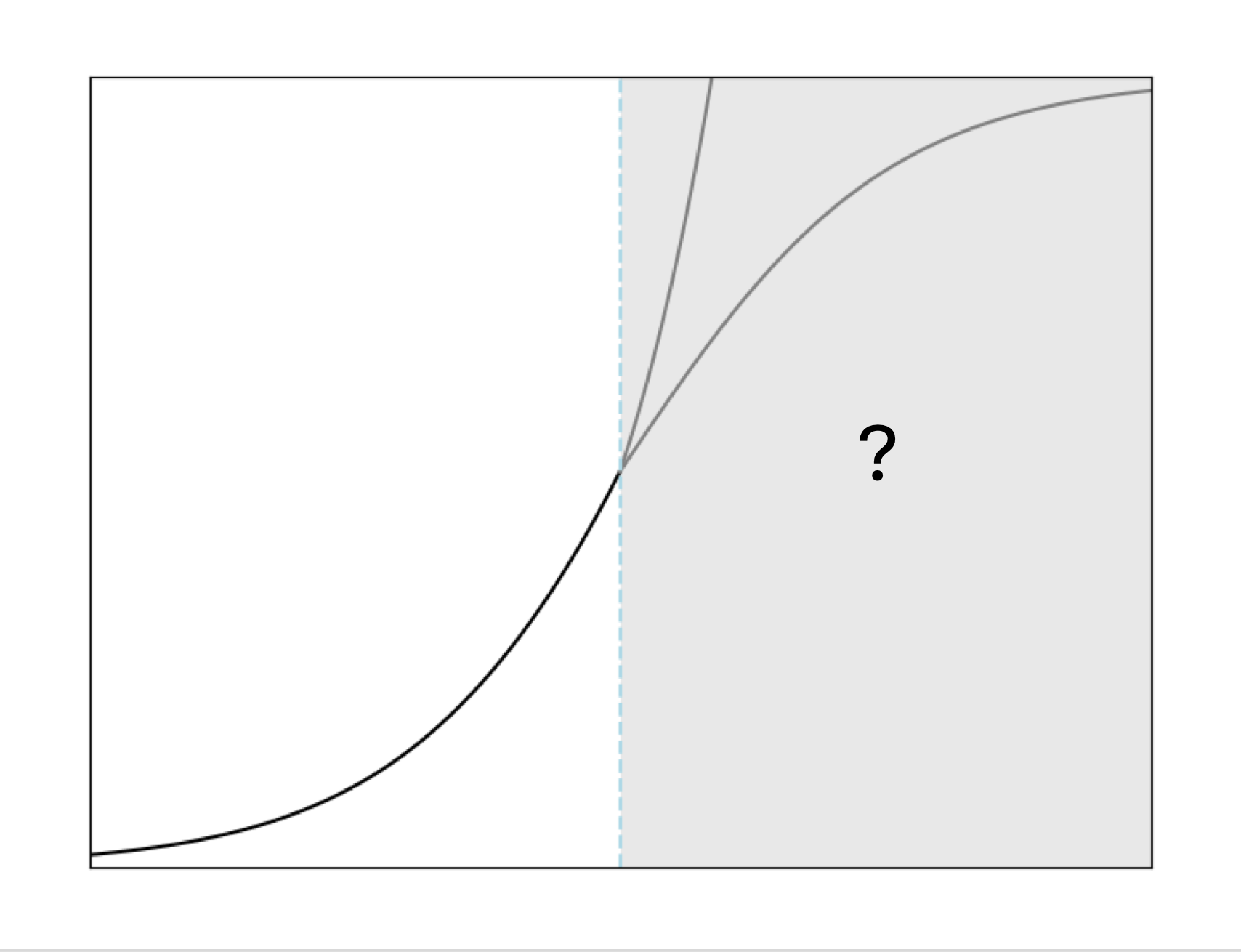

A recent analysis challenges the prevailing belief that continued scaling of AI models will inevitably produce advanced capabilities such as AGI. The author argues that the predictability observed in scaling laws applies mainly to falling perplexity, not to the emergence of new abilities that matter to users. The author also contends that the supply of high-quality training data is becoming a significant bottleneck, while both the cost of acquiring data and the backlash against acquiring it are rising.

Summary written by gemini-2.5-flash-lite from 1 source.

RANK_REASON: The article is an opinion piece by a named author that critiques common assumptions about AI scaling, which places it in the 'commentary' bucket.