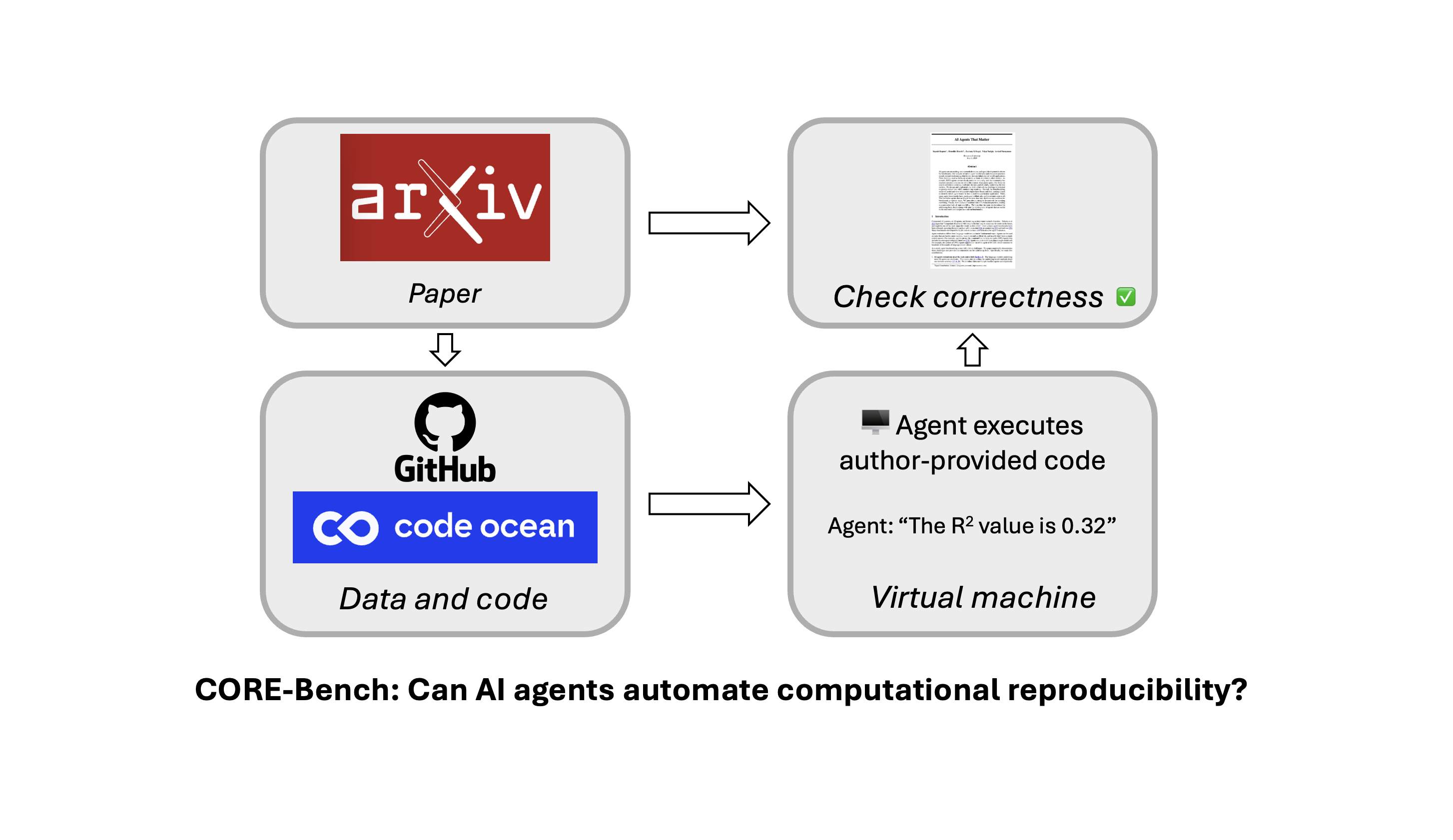

Researchers have developed AutoReproduce, a multi-agent framework that automatically reproduces AI experiments from research papers. The system uses a "paper lineage" to mine implicit knowledge from cited literature and a sampling-based unit testing strategy to ensure that the generated code is executable. A new benchmark, CORE-Bench, has also been introduced to evaluate how well AI agents can automate computational reproducibility. Initial results show that specialized agents such as CORE-Agent with GPT-4o achieve only 22% accuracy on difficult tasks, leaving significant room for improvement in how AI handles complex computational environments.

Summary written by gemini-2.5-flash-lite from 2 sources.

RANK_REASON This cluster describes a new benchmark and framework for evaluating AI's ability to reproduce research, detailed in an arXiv paper.