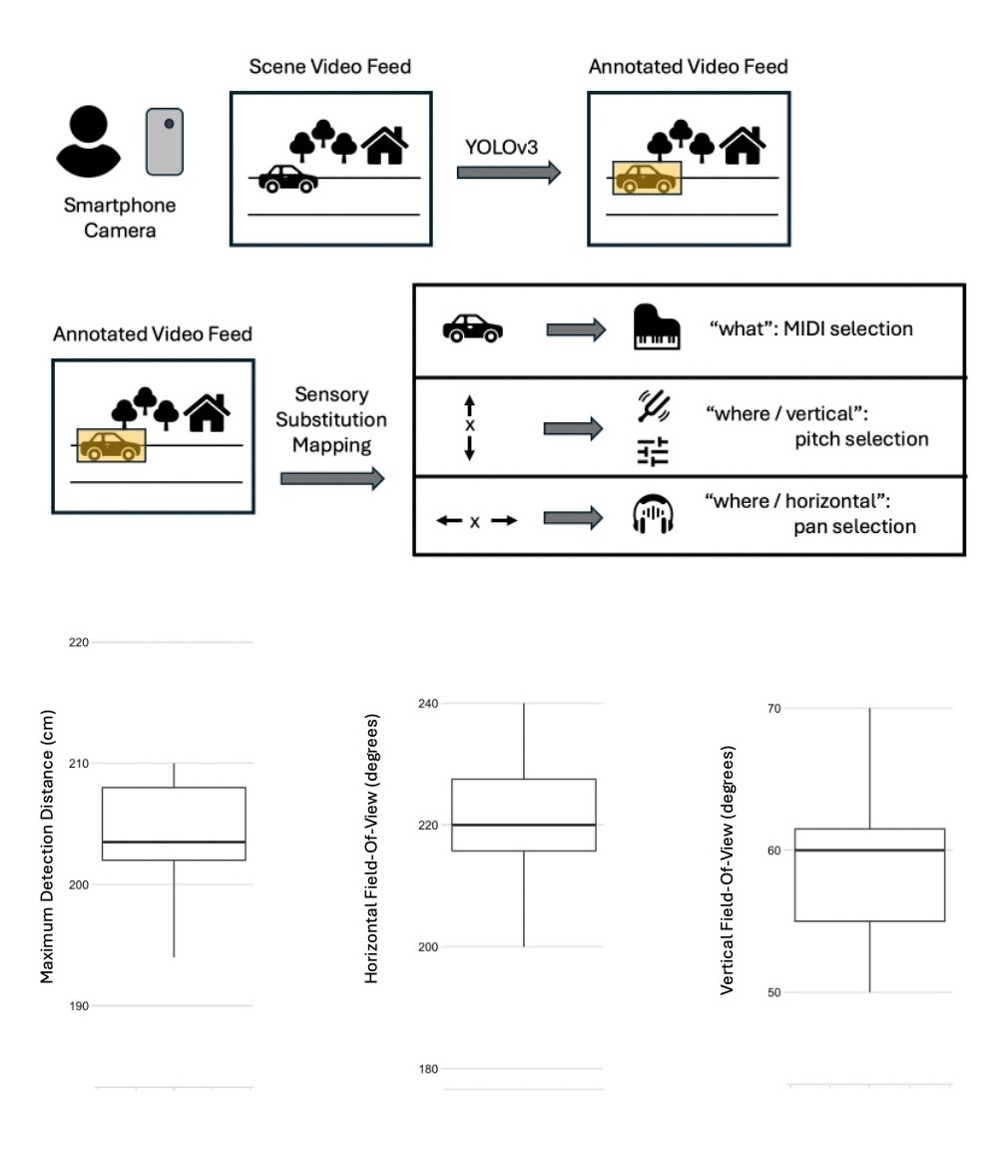

EchoSight is an open-source mobile application for real-time visual-to-audio sensory substitution. The framework aims to assist individuals, particularly those who are blind, by converting visual information into auditory signals. The project uses YOLOv3 for object detection and MIDI for audio output, and is associated with the ARVO 2026 conference.
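To make the detection-to-audio idea concrete, here is a minimal sketch of how one detected object could be turned into MIDI messages. It is not EchoSight's actual mapping, which the summary does not describe. The `Detection` type, the `detection_to_midi` function, the note range, and the choice of vertical position for pitch, horizontal position for stereo pan, and confidence for loudness are all assumptions made for illustration. Only the MIDI status bytes (note-on `0x90`, control change `0xB0`, controller 10 for pan) are standard.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    """Hypothetical detector output; coordinates are normalized to 0..1."""
    label: str
    x_center: float    # 0 = left edge of the frame, 1 = right edge
    y_center: float    # 0 = top edge of the frame, 1 = bottom edge
    confidence: float  # detector score in 0..1


def _clamp(value: float) -> float:
    """Keep a value inside the 0..1 range."""
    return min(max(value, 0.0), 1.0)


def detection_to_midi(det: Detection, low_note: int = 48, high_note: int = 84):
    """Map one detection to raw MIDI bytes on channel 1.

    Assumed mapping (illustrative only):
      - objects higher in the frame get a higher pitch,
      - horizontal position sets the stereo pan,
      - detector confidence sets the note velocity.
    """
    x = _clamp(det.x_center)
    y = _clamp(det.y_center)
    conf = _clamp(det.confidence)

    note = round(low_note + (1.0 - y) * (high_note - low_note))
    pan = round(x * 127)
    velocity = max(1, round(conf * 127))  # velocity 0 would act as note-off

    pan_msg = bytes([0xB0, 10, pan])         # control change, controller 10 (pan)
    note_on = bytes([0x90, note, velocity])  # note-on
    return pan_msg, note_on


if __name__ == "__main__":
    pan_msg, note_on = detection_to_midi(Detection("person", 1.0, 0.0, 1.0))
    print(pan_msg.hex(), note_on.hex())
```

In a real application these bytes would be handed to whatever MIDI output the platform provides; that part is omitted here because the summary gives no detail about it.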

Summary written by gemini-2.5-flash-lite from 3 sources.

IMPACT Provides a novel sensory substitution tool for accessibility, potentially improving quality of life for visually impaired individuals.

RANK_REASON This is an open-source project release with a research conference association.