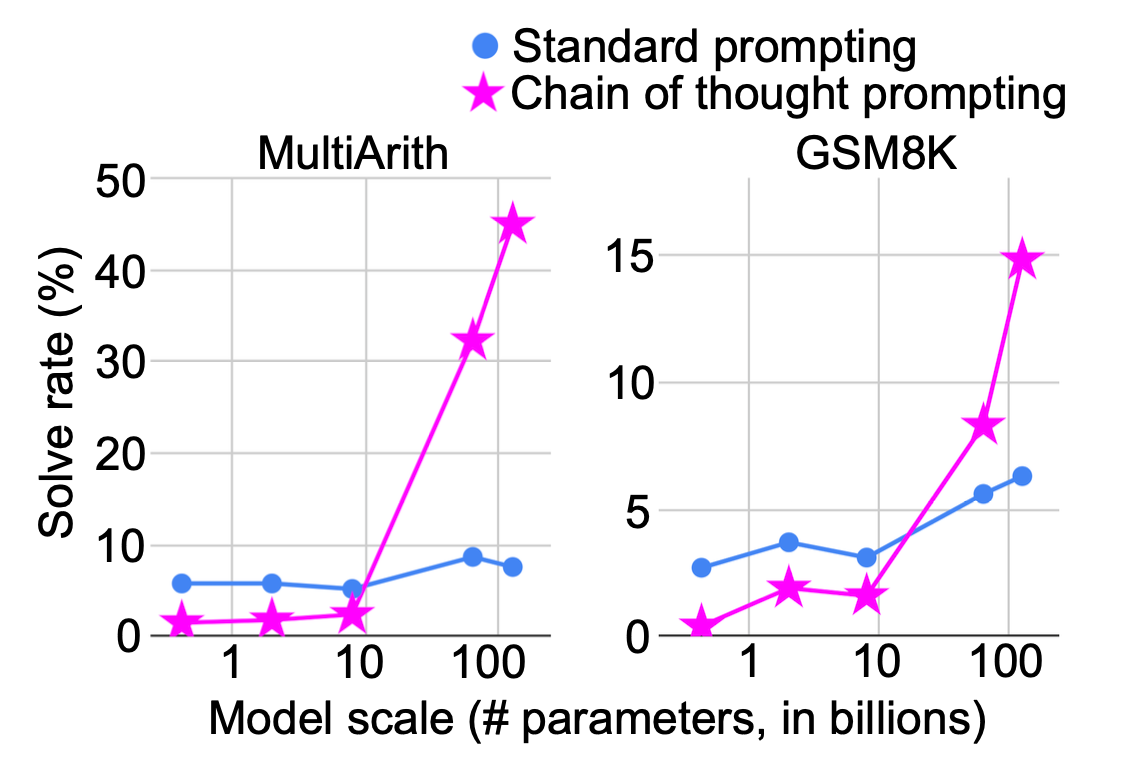

Lilian Weng's latest post explores "thinking time," or test-time computation, in large language models. The approach draws an analogy to human cognition, in which complex problems call for slow, deliberate thinking (System 2) rather than fast, intuitive responses (System 1). The post details how spending more computation at test time, for example through Chain-of-Thought prompting, lets a model perform more operations before answering and can improve accuracy, especially on challenging tasks. Weng also frames this in terms of latent variable modeling, suggesting that methods which generate multiple reasoning paths can be viewed as sampling from a posterior distribution.
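To make the multiple-reasoning-paths idea concrete, here is a minimal sketch, assuming the reasoning path is treated as a latent variable `z` and the final answer as `y`. It draws several paths for one question and aggregates the answers by majority vote, which amounts to a Monte Carlo estimate of the answer distribution with the paths marginalized out. The sampler `sample_reasoning_path` is a hypothetical toy stand-in for a language model call, and its 60% success rate is an arbitrary assumption; none of these names come from the post.

```python
import random
from collections import Counter


def sample_reasoning_path(question, rng):
    """Hypothetical stand-in for one sampled chain of thought.

    Returns (z, y): a latent reasoning trace z and the answer y it leads to.
    Assumption: this toy sampler reaches the correct sum 60% of the time.
    """
    correct = sum(question)
    if rng.random() < 0.6:
        return "add the numbers carefully", correct
    # A faulty path lands on a nearby wrong answer.
    return "slip in the arithmetic", correct + rng.choice([-2, -1, 1, 2])


def estimate_answer_distribution(question, num_samples, seed=0):
    """Estimate the distribution over answers by sampling reasoning paths.

    Each sample draws a path z and its answer y; counting answers while
    ignoring z approximates the marginal over y.
    """
    rng = random.Random(seed)
    counts = Counter(
        sample_reasoning_path(question, rng)[1] for _ in range(num_samples)
    )
    return {answer: count / num_samples for answer, count in counts.items()}


def majority_vote(question, num_samples, seed=0):
    """Return the most frequently sampled answer."""
    distribution = estimate_answer_distribution(question, num_samples, seed)
    return max(distribution, key=distribution.get)


if __name__ == "__main__":
    question = [3, 4, 5]
    print(estimate_answer_distribution(question, num_samples=200))
    print(majority_vote(question, num_samples=200))
```

In this toy setup a single sample is often wrong, while the vote over many samples is much more reliable, which illustrates how extra test-time computation can trade for accuracy.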

Summary written by gemini-2.5-flash-lite from 1 source.
