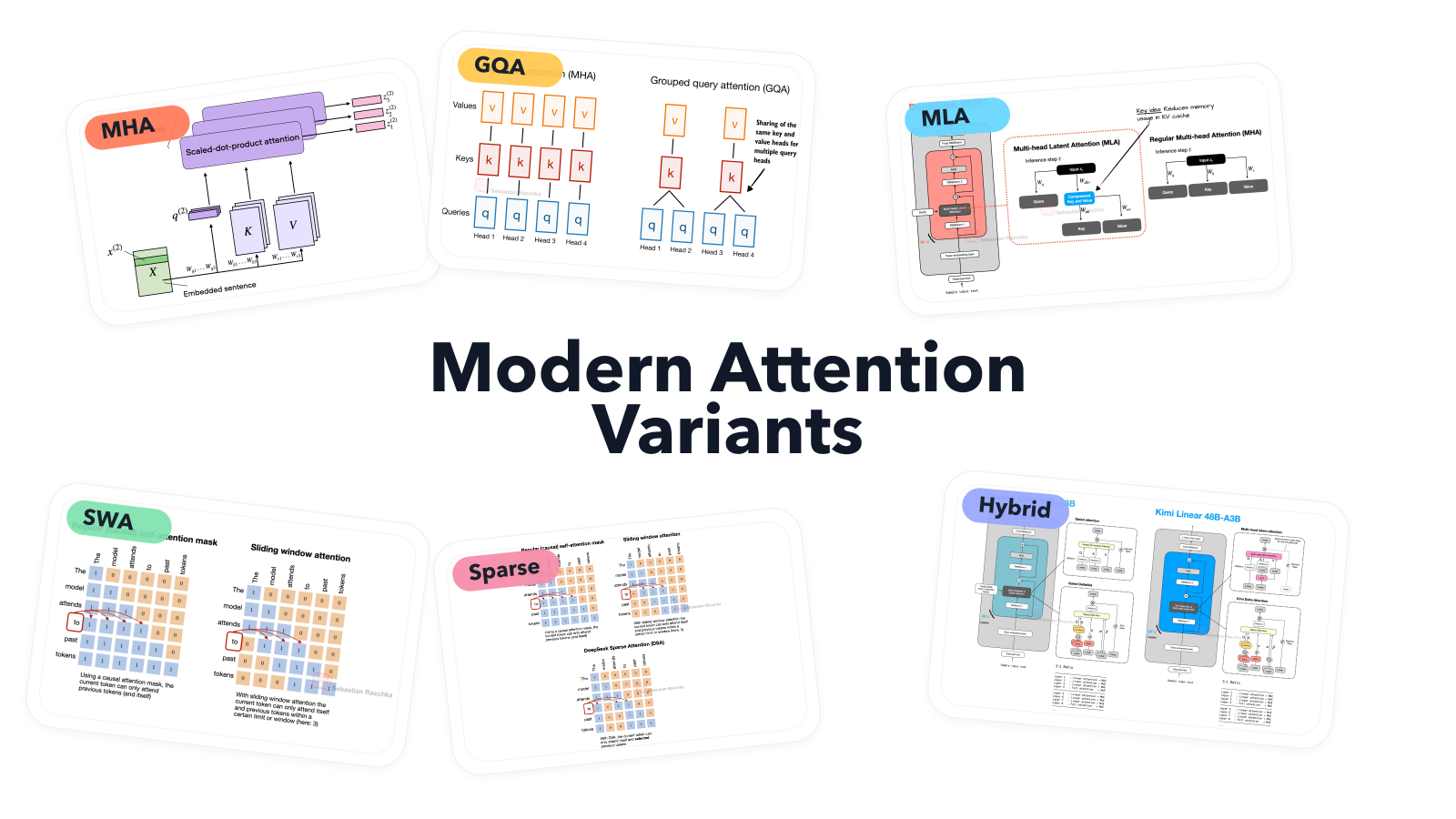

Sebastian Raschka has published a detailed visual guide to the attention mechanisms used in modern large language models. The guide covers 45 architectures, each with a visual model card, and serves as both a reference and a learning resource. It opens with an explanation of multi-head attention and its historical context, then covers variants such as grouped-query attention and sparse attention, referencing architectures such as GPT-2 and OLMo.

Summary written by gemini-2.5-flash-lite from 1 source.
