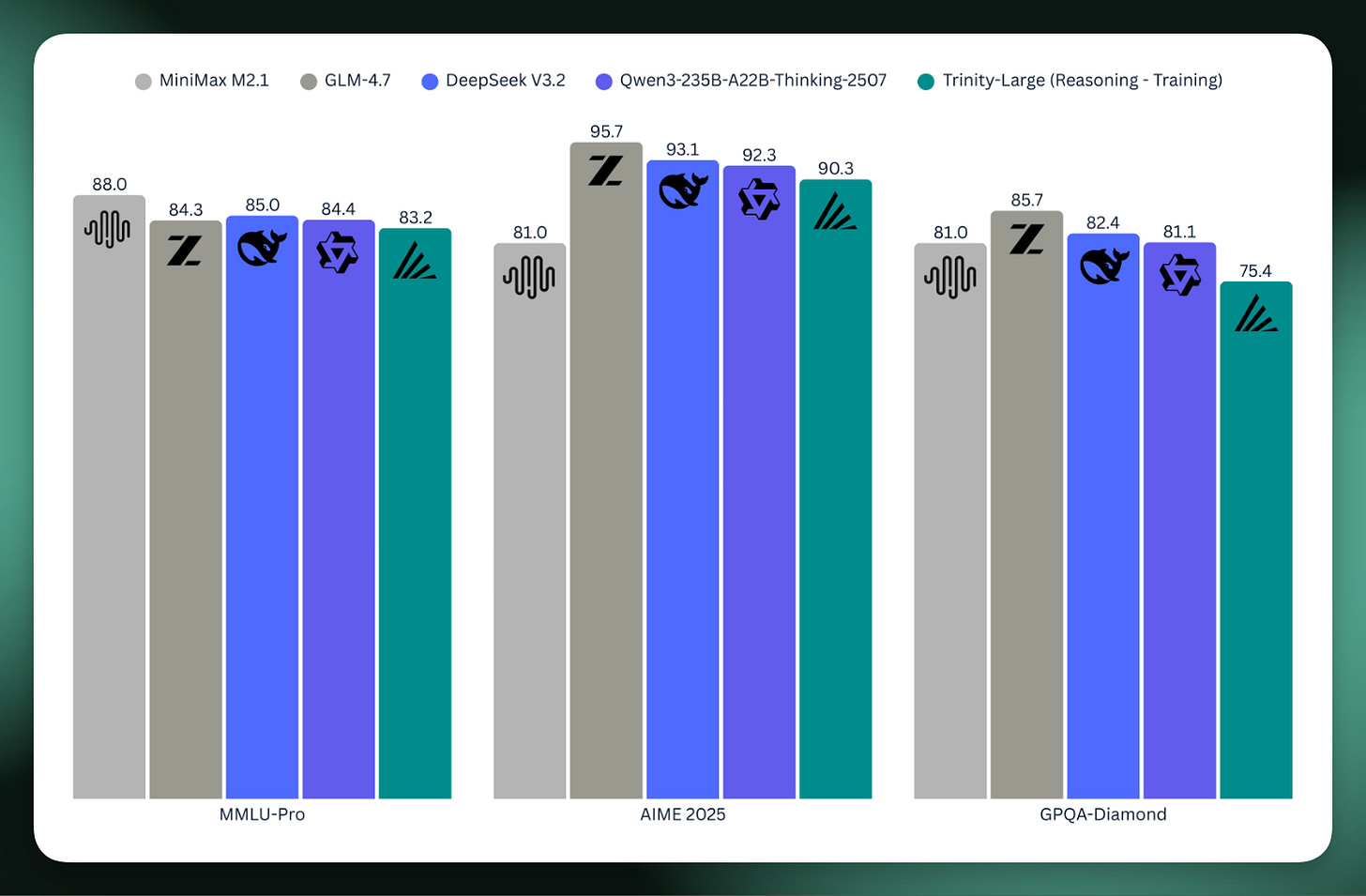

Arcee AI has released its flagship open-source model, Trinity Large, a 400-billion-parameter Mixture-of-Experts model with 13 billion active parameters. The model was trained on 17 trillion tokens using Nvidia Blackwell B300 GPUs and represents a significant step for U.S.-based open model development. The company, which focuses on monetizing open models for on-premise deployments, completed the training run in six months for approximately $20 million.

